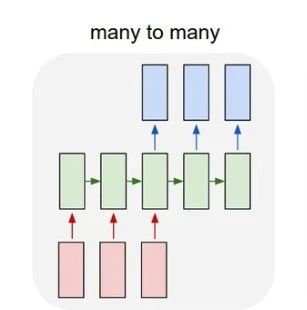

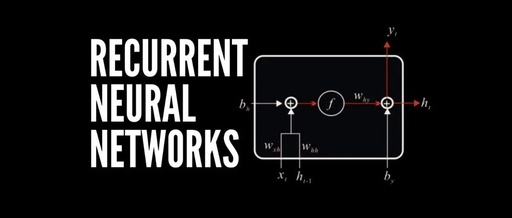

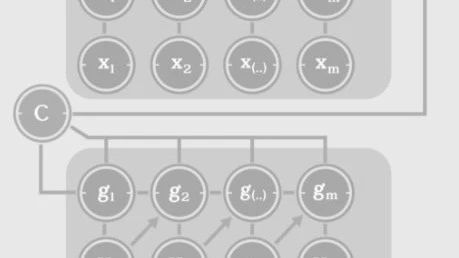

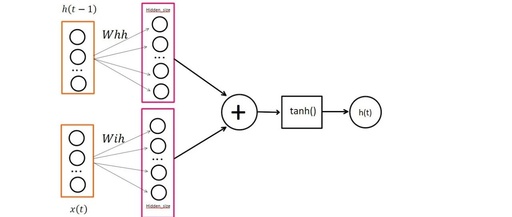

Discussing RNN Gradient Vanishing/Explosion Issues
