How the Attention Mechanism Enhances GAN Image Quality

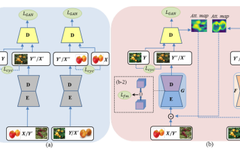

Previous Work

The papers "Unsupervised Attention-guided Image-to-Image Translation" and "Attention-GAN for Object Transfiguration in Wild Images" have studied combining attention mechanisms with GANs, but both use attention to separate the foreground from the background. The main approach is to split the generator network into two parts, where the first part is the prediction …
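The excerpt stops before it describes the second part, so what follows is only a minimal sketch, under stated assumptions, of how a two-branch attention-guided generator is commonly put together. One branch predicts a per-pixel attention mask, the other produces a translated image, and the mask then blends the translated foreground with the original background. Both branches are hypothetical toy stand-ins and not the networks from either paper: `attention_branch` is a per-pixel linear layer followed by a sigmoid, and `translation_branch` just inverts colors. The weights `w` and `b` are also illustrative.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def attention_branch(x, w, b):
    # Hypothetical mask network: per-pixel linear map over channels,
    # squashed to (0, 1). Output shape (H, W, 1) so it broadcasts over C.
    return sigmoid(x @ w + b)[..., None]


def translation_branch(x):
    # Hypothetical translation network: simply inverts pixel values.
    return 1.0 - x


def attention_guided_generator(x, w, b):
    a = attention_branch(x, w, b)
    y = translation_branch(x)
    # Pixels with a ~ 1 (foreground) take the translated values;
    # pixels with a ~ 0 (background) keep the input values.
    return a * y + (1.0 - a) * x, a


# Toy input: left half is a bright "object" (1.0), right half is background (0.2).
x = np.full((4, 4, 3), 0.2)
x[:, :2] = 1.0
w, b = np.full(3, 20.0), -30.0  # illustrative attention weights
out, mask = attention_guided_generator(x, w, b)
```

In this toy run the mask is close to 1 on the object, so those pixels are translated (1.0 becomes roughly 0.0), and close to 0 on the background, so those pixels stay at roughly 0.2. In a real model both branches would be convolutional networks trained jointly under the adversarial loss. Because the mask is soft, background pixels are left approximately unchanged rather than exactly unchanged.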