A Detailed Explanation of RNN Stock Prediction (Python Code)

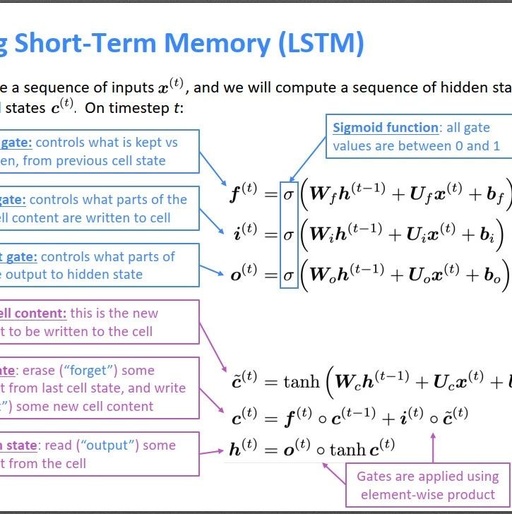

Recurrent neural networks (RNNs) are built around the recursive nature of sequential data such as language, speech, and time series. They are a type of feedback neural network: the network contains loops that feed its own state back into itself at every step, hence the name “recurrent”. They are used specifically for sequential data, such as generating text word by word or predicting …
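
To make the "loop" concrete, here is a minimal sketch of the forward pass of a vanilla (Elman-style) RNN in NumPy. It is an illustration only, not the article's model: the function name `rnn_forward`, the weight names `W_xh`, `W_hh`, `b_h`, the `tanh` activation, and the random input data are all assumptions chosen for the example.

```python
import numpy as np

def rnn_forward(inputs, W_xh, W_hh, b_h):
    """Run a vanilla RNN over a sequence and return the hidden state at every step."""
    hidden = np.zeros(W_hh.shape[0])  # initial hidden state, all zeros
    states = []
    for x_t in inputs:
        # The previous hidden state is fed back in alongside the new input:
        # this feedback is the "recurrent" loop.
        hidden = np.tanh(W_xh @ x_t + W_hh @ hidden + b_h)
        states.append(hidden)
    return np.stack(states)

# Illustrative sizes and random data (assumptions, not real stock prices).
rng = np.random.default_rng(0)
seq_len, input_dim, hidden_dim = 5, 1, 4
inputs = rng.normal(size=(seq_len, input_dim))
W_xh = rng.normal(scale=0.5, size=(hidden_dim, input_dim))
W_hh = rng.normal(scale=0.5, size=(hidden_dim, hidden_dim))
b_h = np.zeros(hidden_dim)

states = rnn_forward(inputs, W_xh, W_hh, b_h)
print(states.shape)  # (5, 4): one hidden vector per time step
```

Because each hidden state depends on the one before it, the state at a given step carries information about every earlier input in the sequence, which is what makes this structure suited to time series.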