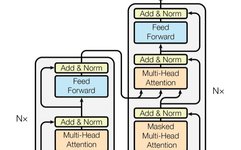

Introduction to Three Attention Mechanisms in Transformer Models and Their PyTorch Implementation

This article covers three key attention mechanisms in Transformer models: self-attention, cross-attention, and causal self-attention. These mechanisms are core components of large language models (LLMs) such as GPT-4 and Llama. Understanding them makes it easier to see how these models work and where they can be applied. We will discuss not only the theoretical concepts …