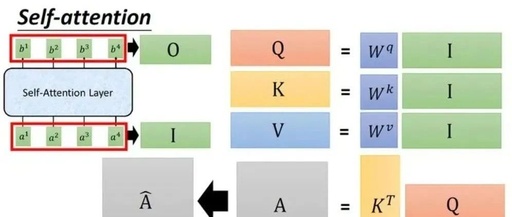

Understanding Q, K, and V in Attention Mechanisms

Question: I have searched various materials and read the original papers, which detail the operations Q, K, and V go through to produce the output. However, I have not found any explanation of where Q, K, and V come from in the first place. Isn’t the input to a layer just a tensor? Why do we have …
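To make the question concrete, here is a minimal pure-Python sketch of single-head scaled dot-product self-attention. It assumes the standard self-attention setup, in which Q, K, and V are all computed from the same input tensor `x` by multiplying it with three separate projection matrices (`w_q`, `w_k`, `w_v`). In a trained model those matrices are learned parameters; here they are random stand-ins. The sizes and helper names (`matmul`, `self_attention`, and so on) are hypothetical and chosen only for illustration.

```python
import math
import random


def matmul(a, b):
    """Multiply an (n x m) matrix by an (m x p) matrix, both stored as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m):
    return [list(col) for col in zip(*m)]


def softmax(row):
    # Subtract the row maximum for numerical stability.
    mx = max(row)
    exps = [math.exp(v - mx) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def self_attention(x, w_q, w_k, w_v):
    # Q, K and V are three different linear projections of the SAME input x.
    q = matmul(x, w_q)
    k = matmul(x, w_k)
    v = matmul(x, w_v)
    d_k = len(w_k[0])
    # Score every query against every key, scaled by sqrt(d_k).
    scores = matmul(q, transpose(k))
    scores = [[s / math.sqrt(d_k) for s in row] for row in scores]
    # Each row of weights is a probability distribution over the input positions.
    weights = [softmax(row) for row in scores]
    # The output for each position is a weighted sum of the value vectors.
    return matmul(weights, v), weights


rng = random.Random(0)
n_tokens, d_model, d_k = 3, 4, 2
x = random_matrix(n_tokens, d_model, rng)  # hypothetical input: 3 token vectors of width 4
w_q = random_matrix(d_model, d_k, rng)     # random stand-ins for learned weights
w_k = random_matrix(d_model, d_k, rng)
w_v = random_matrix(d_model, d_k, rng)

out, weights = self_attention(x, w_q, w_k, w_v)
print(len(out), len(out[0]))  # 3 positions, each with a d_k-wide output vector
```

So the layer input really is a single tensor; Q, K, and V are simply three learned "views" of it. In cross-attention (for example, a Transformer decoder attending to encoder output), Q is projected from one sequence while K and V are projected from another; this sketch covers only the self-attention case.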