
Attention mechanisms have become very popular in recent years. Where did they originate, and how have they developed since? Let's follow the author, Mr. Haohao, and take a look.

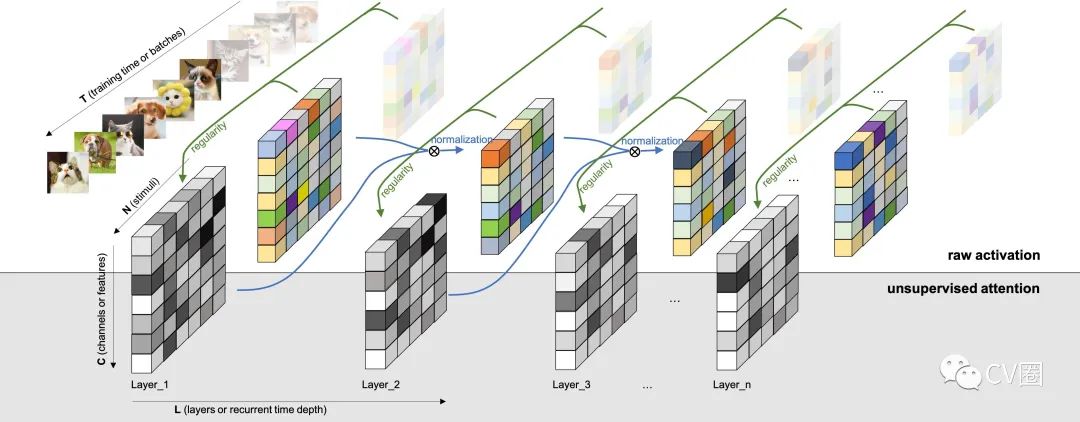

1 Background Knowledge

2 Principles and Classification of Attention Mechanism

2.1 Principles of Attention Mechanism

2.2 Classification of Attention Mechanism

-

Soft Attention Mechanism. It can be divided into item-wise soft attention and location-wise soft attention.

-

Hard Attention Mechanism. It can be divided into item-wise hard attention and location-wise hard attention.

-

Self-Attention Mechanism. This variant of the attention mechanism reduces reliance on external information and is better at capturing the internal correlations of data or features. In text, self-attention is mainly used to address long-distance dependencies by computing how strongly each word influences every other word.
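To make the three categories concrete, here is a minimal NumPy sketch. It assumes a plain dot-product score between a query and each item, which is an illustrative choice and not something the classification itself prescribes. Soft attention averages all items by their weights, hard attention keeps a single item, and self-attention lets every item of a sequence act as a query over that same sequence.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def soft_attention(query, items):
    """Weighted average of ALL items; items: (n, d), query: (d,)."""
    weights = softmax(items @ query)
    return weights @ items, weights

def hard_attention(query, items, rng=None):
    """Select ONE item: argmax if rng is None, otherwise sample from the weights."""
    weights = softmax(items @ query)
    if rng is None:
        idx = int(np.argmax(weights))
    else:
        idx = int(rng.choice(len(items), p=weights))
    return items[idx], idx

def self_attention(items):
    """Every item is a query over the same sequence; returns (n, d)."""
    weights = softmax(items @ items.T)
    return weights @ items
```

The argmax or sampling step in `hard_attention` is not differentiable, which is why hard-attention models typically need reinforcement learning or other gradient estimators.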

3 Soft Attention Mechanism (soft-attention)

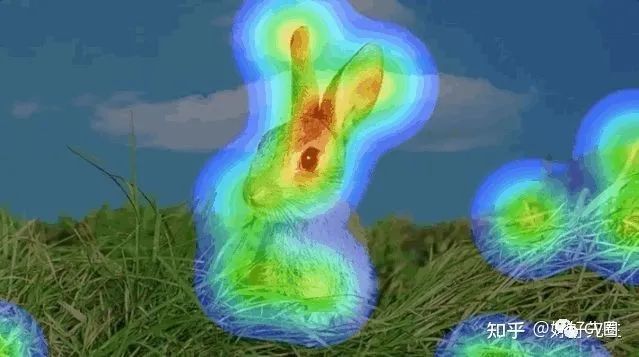

3.1 Show, Attend and Tell: Neural Image Caption Generation with Visual Attention

-

Main Idea: Inspired by recent work in machine translation and object detection, the article introduces an attention-based model that automatically learns to describe the content of images. It describes how the model can be trained deterministically using standard backpropagation techniques, and stochastically by maximizing a variational lower bound. The article also uses visualizations to show how the model automatically learns to fix its gaze on salient objects while generating the corresponding words in the output sequence. The use of attention is validated with state-of-the-art performance on three benchmark datasets: Flickr8k, Flickr30k, and MS COCO.

-

Paper Link: https://arxiv.org/pdf/1502.03044v3.pdf

-

Code Link: https://github.com/sgrvinod/a-PyTorch-Tutorial-to-Image-Captioning
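In the deterministic (soft) version of this model, the attention weights over image locations at each generated word come from a softmax, and the training loss adds a "doubly stochastic" penalty that encourages every location to receive roughly unit total attention across the whole caption. The sketch below shows only that penalty; the `(T, L)` array layout and the default value of the `lam` weight are our assumptions.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def doubly_stochastic_penalty(alphas, lam=1.0):
    """alphas: (T, L) attention weights, one row per generated word (each row sums to 1).
    Penalizes locations whose total attention over the T words drifts away from 1.
    lam is an assumed hyperparameter value."""
    per_location = alphas.sum(axis=0)          # total attention per location, shape (L,)
    return lam * np.sum((1.0 - per_location) ** 2)

# Two words attending over two image locations
balanced = np.array([[1.0, 0.0], [0.0, 1.0]])   # each location used once: penalty 0.0
collapsed = np.array([[1.0, 0.0], [1.0, 0.0]])  # location 0 used twice, location 1 never: penalty 2.0
```

The penalty pushes the model to spread its attention over the image during a caption instead of staring at one region for every word.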

3.2 Action Recognition using Visual Attention

-

Main Idea: For the task of action recognition in videos, the article proposes a model based on soft attention. It uses multi-layered recurrent neural networks (RNNs) with long short-term memory (LSTM) units, which are deep both spatially and temporally. The model learns to focus selectively on parts of the video frames and classifies videos after taking a few glimpses. In effect, the model learns which parts of a frame are relevant to the task at hand and attaches higher importance to them. The model is evaluated on the UCF-11 (YouTube actions), HMDB-51, and Hollywood2 datasets, and the article analyzes how the model's focus of attention depends on the scene and the action being performed.

-

Paper Link: https://arxiv.org/pdf/1511.04119v3.pdf

-

Code Link: https://github.com/kracwarlock/action-recognition-visual-attention
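The location softmax at the heart of this kind of model can be sketched as follows: the previous LSTM hidden state scores each of the K x K cells of a convolutional feature cube, and the LSTM input at time t is the weighted average of the cell features. The shapes and the single linear scoring matrix `W` are illustrative assumptions, not taken from the released code.

```python
import numpy as np

def location_softmax(hidden, W):
    """hidden: (H,) previous LSTM state; W: (K*K, H), one row of weights per location."""
    scores = W @ hidden
    scores = scores - scores.max()
    weights = np.exp(scores)
    return weights / weights.sum()

def attend_frame(features, hidden, W):
    """features: (K, K, D) conv feature cube of one frame.
    Returns the expected feature vector (D,) and the K x K attention map."""
    K, _, D = features.shape
    flat = features.reshape(K * K, D)
    l = location_softmax(hidden, W)
    return l @ flat, l.reshape(K, K)
```

With a zero `W`, the map is uniform and the model reduces to average pooling over the frame; training moves the probability mass toward the regions that help classify the action.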

3.3 Describing Videos by Exploiting Temporal Structure

-

Main Idea: Recent advances in using recurrent neural networks (RNNs) for image description have motivated exploring their application to video description. However, while images are static, working with videos requires modeling their dynamic temporal structure and then properly integrating that information into a natural-language description. In this context, the article proposes an approach that successfully takes into account both the local and the global temporal structure of videos to produce descriptions. First, the approach incorporates a spatio-temporal 3-D convolutional neural network (3-D CNN) representation of short temporal dynamics; the 3-D CNN is trained to produce a representation suited to human actions and behavior. Second, the article proposes a temporal attention mechanism that goes beyond local temporal modeling and learns to automatically select the most relevant temporal segments given the text-generating RNN.

-

Paper Link: https://arxiv.org/pdf/1502.08029v5.pdf

-

Code Link: https://github.com/yaoli/arctic-capgen-vid
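A temporal attention step of this kind can be sketched as an additive score between the decoder's previous hidden state and each temporal segment, followed by a softmax over time. The padding mask for variable-length videos is our own addition for batching, and all argument names and shapes are assumptions rather than the paper's code.

```python
import numpy as np

def temporal_attention(frames, mask, state, W_a, U_a, w):
    """frames: (T, D) per-segment features; mask: (T,) with 1 for real segments, 0 for padding;
    state: (S,) previous decoder hidden state; W_a: (S, A); U_a: (D, A); w: (A,)."""
    scores = np.tanh(state @ W_a + frames @ U_a) @ w   # relevance of each segment, (T,)
    scores = np.where(mask > 0, scores, -np.inf)       # padded segments get zero weight
    weights = np.exp(scores - scores.max())
    weights = weights / weights.sum()
    return weights @ frames, weights                   # context vector (D,), weights (T,)
```

Because the weights are recomputed for every generated word, each word of the description can draw on a different part of the video.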

3.4 Attention-Gated Networks for Improving Ultrasound Scan Plane Detection

-

Main Idea: In this work, attention-gated networks are applied to real-time automated scan plane detection for fetal ultrasound screening. Scan plane detection in fetal ultrasound is challenging because poor image quality makes the images hard to interpret, both for clinicians and for automated algorithms. To address this, the article proposes incorporating self-gated soft attention mechanisms. A soft attention mechanism generates a gating signal that is trainable end to end, which allows the network to contextualize the local information that is useful for prediction. The proposed attention mechanism is generic and can easily be incorporated into any existing classification architecture while requiring only a few additional parameters. The article shows that when the base network has high capacity, the incorporated attention mechanism can improve overall performance while providing efficient object localization. When the capacity of the base network is low, the method greatly outperforms the baseline approach and significantly reduces false positives.

-

Paper Link: https://arxiv.org/pdf/1804.05338v1.pdf

-

Code Link: https://github.com/ozan-oktay/Attention-Gated-Networks
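An additive attention gate can be sketched in NumPy. Local features and a gating signal are projected, summed, passed through a ReLU, reduced to one score per spatial position, and squashed by a sigmoid into a coefficient that rescales the local features. To keep the sketch short, we flatten the feature map to `(N, C)` so that 1x1 convolutions become matrix products, and we broadcast a single global gating vector `g` to all positions. Both are simplifying assumptions; in the paper, the gating signal comes from a coarser-scale feature map.

```python
import numpy as np

def attention_gate(x, g, W_x, W_g, psi, b=0.0):
    """x: (N, C) local features at N spatial positions; g: (G,) gating signal.
    W_x: (C, F), W_g: (G, F), psi: (F,). Returns gated features and the (N,) attention map."""
    inter = np.maximum(0.0, x @ W_x + g @ W_g + b)   # additive combination + ReLU, (N, F)
    alpha = 1.0 / (1.0 + np.exp(-(inter @ psi)))     # sigmoid: one coefficient per position
    return x * alpha[:, None], alpha
```

Because the gate only rescales existing features, it adds few parameters, and the map `alpha` can be visualized directly as a rough localization of the relevant anatomy.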

3.5 Adaptive Physics-Informed Neural Networks with Soft Attention Mechanism

-

Main Idea: The article proposes a fundamentally new way to train PINNs adaptively, in which the adaptive weights are fully trainable. This lets the network learn which regions of the solution are difficult and forces it to focus on them, reminiscent of the soft multiplicative-mask attention mechanism used in computer vision. The basic idea of these self-adaptive PINNs is to make the weights increase where the corresponding losses are high. This is achieved by training the network to minimize the losses while simultaneously maximizing the weights (i.e., by finding a saddle point of the cost surface). The article shows that this is formally equivalent to solving a PDE-constrained optimization problem with a penalty-based method in which the monotonically non-decreasing penalty coefficients are trainable. In numerical experiments on the stiff Allen-Cahn PDE, the self-adaptive PINN outperformed other state-of-the-art PINN algorithms in L2 error while using fewer training iterations. The appendix contains additional results for the Burgers' and Helmholtz PDEs, which confirm the trends observed in the Allen-Cahn experiments.

-

Paper Link: https://arxiv.org/pdf/2009.04544v2.pdf

-

Code Link: https://github.com/levimcclenny/SA-PINNs
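The min-max training rule described above can be illustrated on a toy problem that involves no PDE. A single parameter `theta` is fitted to four targets, one of which is "hard" because it lies far from the others, and each target has its own trainable weight. Gradient descent updates `theta` and gradient ascent updates the weights. Because each weight's gradient is a squared residual, the weights never decrease. This toy, including its learning rates and the identity mask on the weights, is our own illustration and not code from SA-PINNs.

```python
import numpy as np

def self_adaptive_fit(targets, steps=200, lr_theta=0.01, lr_lambda=0.01):
    """Minimize sum(lam * r**2) over theta while maximizing it over lam, with r = theta - targets."""
    theta = 0.0
    lam = np.ones_like(targets)           # one trainable weight per "training point"
    for _ in range(steps):
        r = theta - targets               # residuals
        grad_theta = 2.0 * np.sum(lam * r)
        grad_lam = r ** 2                 # always >= 0, so lam is non-decreasing
        theta -= lr_theta * grad_theta    # descent on the model parameter
        lam += lr_lambda * grad_lam       # ascent on the adaptive weights
    return theta, lam
```

Running `self_adaptive_fit(np.array([1.0, 1.0, 1.0, 5.0]))` makes the weight on the hard target grow fastest, which pulls `theta` above the unweighted mean of 2.0. In an actual self-adaptive PINN, the same mechanism acts on the residuals of the PDE at individual training points.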

3.6 Recurrent Models of Visual Attention

-

Main Idea: Applying convolutional neural networks to large images is computationally expensive because the amount of computation scales linearly with the number of image pixels. The article presents a novel recurrent neural network model that extracts information from an image or video by adaptively selecting a sequence of regions or locations and processing only the selected regions at high resolution. Like convolutional neural networks, the proposed model has a degree of translation invariance built in, but the amount of computation it performs can be controlled independently of the input image size. Although the model is non-differentiable, it can be trained with reinforcement learning methods to learn task-specific policies. The article evaluates the model on several image classification tasks, where it significantly outperforms a convolutional neural network baseline on cluttered images. It also evaluates the model on a dynamic visual control problem, where the model learns to track a simple object without an explicit training signal.

-

Paper Link: https://arxiv.org/pdf/1406.6247v1.pdf

-

Code Link: https://github.com/kevinzakka/recurrent-visual-attention
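The "glimpse sensor" that lets this kind of model look only at selected regions can be sketched in NumPy. Around a chosen location, it extracts several patches of increasing size and downsamples each one to the same resolution, so the centre is seen sharply and the surroundings coarsely. The number of scales, the zero padding at image borders, and the use of average pooling for downsampling are our assumptions. The recurrent core and the reinforcement-learning training of the location policy are not shown.

```python
import numpy as np

def extract_glimpse(image, center, size, scales=2):
    """image: (H, W) array; center: (row, col) inside the image; size: side of each output patch.
    Patch k covers a (size * 2**k)-wide window, average-pooled down to size x size."""
    pad = size * 2 ** (scales - 1)
    padded = np.pad(image, pad, mode="constant")   # zeros outside the image
    cy, cx = center[0] + pad, center[1] + pad
    patches = []
    for k in range(scales):
        factor = 2 ** k
        span = size * factor
        top, left = cy - span // 2, cx - span // 2
        window = padded[top:top + span, left:left + span]
        # average each factor x factor block so every patch ends up size x size
        pooled = window.reshape(size, factor, size, factor).mean(axis=(1, 3))
        patches.append(pooled)
    return np.stack(patches)                       # (scales, size, size)
```

The cost of one glimpse depends only on `size` and `scales`, not on the image dimensions. This is the property that lets the model control its computation independently of the input size.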

4 Hard Attention Mechanism (hard-attention)

4.1 Attention-based Extraction of Structured Information from Street View Imagery

-

Main Idea: The article proposes a neural network model, based on CNNs, RNNs, and a novel attention mechanism, that achieves 84.2% accuracy on the challenging French Street Name Signs (FSNS) dataset. This significantly outperforms the previous state of the art (Smith'16), which achieved 72.46%. The new method is also simpler and more general than previous approaches. To demonstrate the generality of the model, the article shows that it also performs well on an even more challenging dataset derived from Google Street View, where the goal is to extract business names from storefronts. Finally, the article studies the speed/accuracy trade-off that results from using CNN feature extractors of different depths. Surprisingly, it finds that deeper is not always better, in terms of either accuracy or speed. The resulting model is simple, accurate, and fast, which makes it suitable for large-scale use on a variety of challenging real-world text extraction problems.

-

Paper Link: https://arxiv.org/pdf/1704.03549v4.pdf

-

Code Link: https://github.com/tensorflow/models
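As we understand the paper, part of what makes its attention location-aware is concatenating one-hot encodings of each cell's row and column index to the CNN feature map before attention weights are computed. Treat that reading as an assumption and check the paper for details. A minimal NumPy version of the encoding step:

```python
import numpy as np

def add_coordinate_encoding(features):
    """features: (H, W, C) CNN feature map. Appends one-hot row and column indices so that
    each position's feature vector also says where it is: output shape (H, W, C + H + W)."""
    H, W, _ = features.shape
    rows = np.broadcast_to(np.eye(H)[:, None, :], (H, W, H))   # entry [i, j] is onehot(i)
    cols = np.broadcast_to(np.eye(W)[None, :, :], (H, W, W))   # entry [i, j] is onehot(j)
    return np.concatenate([features, rows, cols], axis=-1)
```

Convolutional features alone are largely translation-invariant. The extra channels give the attention module explicit position information, which helps when characters must be read out in order.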

4.2 Hard Non-Monotonic Attention for Character-Level Transduction

-

Main Idea: Character-level string-to-string transduction is an important component of various NLP tasks. The goal is to map an input string to an output string, where the two strings may differ in length and draw their characters from different alphabets. Recent approaches use sequence-to-sequence models with an attention mechanism to learn which parts of the input string the model should attend to while generating the output string. Both soft attention and hard monotonic attention have been used. Hard non-monotonic attention, however, has only been used in other sequence modeling tasks, such as image captioning, where it requires a stochastic approximation to compute the gradient. In this work, the article introduces an exact, polynomial-time algorithm for marginalizing over the exponential number of non-monotonic alignments between two strings. It also shows that hard attention models can be viewed as neural reparameterizations of the classical IBM Model 1.

-

Paper Link: https://arxiv.org/pdf/1808.10024v2.pdf

-

Code Link: https://github.com/shijie-wu/neural-transducer
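A few lines are enough to show why exact marginalization is tractable. If, as in IBM Model 1, each output character's alignment is chosen independently given the decoder history, the sum over all n^m alignments factorizes into a product of per-position sums. The sketch below takes the alignment and emission probabilities as given matrices, whereas in the paper they are produced by the neural network. It checks the factorized result against brute-force enumeration.

```python
import itertools
import numpy as np

def marginal_likelihood(align, emit):
    """align[i, j] = p(a_i = j): probability that output char i attends to input char j.
    emit[i, j] = p(y_i | a_i = j). Both (m, n). Cost O(m * n)."""
    return float(np.prod(np.sum(align * emit, axis=1)))

def brute_force_likelihood(align, emit):
    """The same quantity by enumerating all n**m alignments (exponential; for checking only)."""
    m, n = align.shape
    total = 0.0
    for a in itertools.product(range(n), repeat=m):
        total += np.prod([align[i, a[i]] * emit[i, a[i]] for i in range(m)])
    return float(total)
```

The distributive law turns the exponential sum into m sums of n terms each. No sampling is needed, so the gradient of this likelihood is exact.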

4.3 Saccader: Improving Accuracy of Hard Attention Models for Vision

-

Main Idea: The article proposes a novel hard attention model called Saccader. Key to Saccader is a pretraining step that requires only class labels and provides initial attention locations for policy gradient optimization. The article's best models narrow the gap to common ImageNet baselines, achieving 75% top-1 and 91% top-5 accuracy while attending to less than one-third of the image.

-

Paper Link: https://arxiv.org/pdf/1908.07644v3.pdf

-

Code Link: https://github.com/google-research/google-research
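Because hard attention locations are discrete choices, they are optimized with policy gradients. The generic REINFORCE estimator for a categorical location policy is sketched below. The reward, baseline, and logits are placeholders, and Saccader's own architecture and pretraining step are not reproduced here.

```python
import numpy as np

def softmax(z):
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def sample_location(logits, rng):
    """Hard attention: draw one location index from the policy pi = softmax(logits)."""
    return int(rng.choice(len(logits), p=softmax(logits)))

def reinforce_grad(logits, action, reward, baseline=0.0):
    """Gradient of (reward - baseline) * log pi(action) with respect to the logits."""
    onehot = np.zeros_like(logits, dtype=float)
    onehot[action] = 1.0
    return (reward - baseline) * (onehot - softmax(logits))
```

Ascending this gradient raises the probability of locations that earned a better-than-baseline reward and lowers the probability of the rest. Subtracting a baseline reduces the variance of the estimate without changing its expectation.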

4.4 Overcoming catastrophic forgetting with hard attention to the task

-

Main Idea: Catastrophic forgetting occurs when a neural network loses the information learned on previous tasks after being trained on subsequent tasks. This problem remains a hurdle for artificial intelligence systems with sequential learning capabilities. The article proposes a task-based hard attention mechanism that preserves information from previous tasks without affecting the learning of the current task. A hard attention mask is learned concurrently with each task through stochastic gradient descent, and previous masks are used to condition this learning. The article shows that the proposed mechanism is effective at reducing catastrophic forgetting, cutting the forgetting rates of current methods by 45% to 80%. It also shows that the mechanism is robust to different hyperparameter choices and offers a number of monitoring capabilities. The approach makes it possible to control both the stability and the compactness of the learned knowledge, which the article suggests also makes it attractive for online learning and network compression applications.

-

Paper Link: https://arxiv.org/pdf/1801.01423v3.pdf

-

Code Link: https://github.com/joansj/hat
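The two ingredients described above, per-task masks and gradient conditioning with previous masks, can be sketched in NumPy. Following our reading of the paper, a mask is a sigmoid of a task embedding multiplied by a large positive factor `s`, which pushes the mask toward 0/1. The gradient of each weight is then shrunk according to how much both of the units it connects were used by earlier tasks. Function names and shapes are illustrative assumptions.

```python
import numpy as np

def hat_mask(embedding, s):
    """Near-binary mask sigmoid(s * e); a larger s makes the gate closer to a hard 0/1 choice."""
    return 1.0 / (1.0 + np.exp(-s * embedding))

def accumulate_mask(prev_mask, new_mask):
    """Units used by any task so far (element-wise max over tasks)."""
    return np.maximum(prev_mask, new_mask)

def condition_gradient(grad, prev_in, prev_out):
    """grad: (n_out, n_in) weight gradient for the current task.
    Scale entry [i, j] by 1 - min(prev_out[i], prev_in[j]), so weights between units
    that earlier tasks relied on are (almost) frozen."""
    return grad * (1.0 - np.minimum.outer(prev_out, prev_in))
```

Units that earlier tasks left unused keep their full gradient, so new tasks can still learn in the remaining capacity of the network.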

5 Self-Attention Mechanism (self-attention)

5.1 Reinforced Self-Attention Network: a Hybrid of Hard and Soft Attention for Sequence Modeling

-

Main Idea: The article integrates soft attention and hard attention into a single context fusion model called “Reinforced Self-Attention (ReSA)” so that each helps the other. In ReSA, hard attention trims a sequence for soft self-attention to process, while soft attention feeds reward signals back to facilitate the training of hard attention. For this purpose, the article develops a novel hard attention called “Reinforced Sequence Sampling (RSS)”, which selects tokens in parallel and is trained via policy gradients. Using two RSS modules, ReSA efficiently extracts the sparse dependencies between each pair of selected tokens. Finally, the article proposes an RNN/CNN-free sentence-encoding model built entirely on ReSA, called “Reinforced Self-Attention Network (ReSAN)”. It achieves state-of-the-art performance on the Stanford Natural Language Inference (SNLI) and Sentences Involving Compositional Knowledge (SICK) datasets.

-

Paper Link: https://arxiv.org/pdf/1801.10296v2.pdf

-

Code Link: https://github.com/taoshen58/DiSAN
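A rough sketch of the division of labour in ReSA: a hard attention step samples which tokens to keep, independently and in parallel as RSS does, and soft self-attention then runs only over the kept tokens. This simplifies the paper considerably. It uses a single keep mask instead of two RSS modules and plain scaled dot-product scores, and it omits the policy-gradient training in which the soft-attention side supplies the reward.

```python
import numpy as np

def rss_sample(keep_probs, rng):
    """Hard attention: keep or drop every token at once via independent Bernoulli draws."""
    return rng.random(keep_probs.shape) < keep_probs

def pruned_self_attention(x, keep):
    """Soft self-attention restricted to the kept tokens; dropped tokens get zero output.
    x: (n, d) token representations; keep: (n,) boolean mask."""
    out = np.zeros_like(x)
    idx = np.flatnonzero(keep)
    if idx.size == 0:
        return out
    sub = x[idx]
    scores = sub @ sub.T / np.sqrt(x.shape[1])
    w = np.exp(scores - scores.max(axis=1, keepdims=True))
    w /= w.sum(axis=1, keepdims=True)
    out[idx] = w @ sub
    return out
```

Pruning shrinks the attention matrix from n x n to k x k for k kept tokens, which is where the sparsity of the extracted dependencies comes from.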

5.2 Attention Is All You Need

-

Main Idea: The dominant sequence transduction models are based on complex recurrent or convolutional neural networks in an encoder-decoder configuration, and the best-performing models also connect the encoder and decoder through an attention mechanism. The article proposes a new, simple network architecture, the Transformer, based solely on attention mechanisms and dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show that these models are superior in quality while being more parallelizable and requiring significantly less time to train. The model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving on the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, the model establishes a new single-model state-of-the-art BLEU score of 41.8 after training for 3.5 days on eight GPUs.

-

Paper Link: https://arxiv.org/pdf/1706.03762v5.pdf

-

Code Link: https://github.com/tensorflow/tensor2tensor
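The core operation of the Transformer is scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V. A minimal NumPy version follows, with an optional causal mask of the kind the decoder uses so that position i cannot attend to later positions. Multi-head projections are omitted.

```python
import numpy as np

def scaled_dot_product_attention(Q, K, V, causal=False):
    """Q: (n, d_k), K: (m, d_k), V: (m, d_v). Returns (n, d_v) outputs and (n, m) weights."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)       # scaling keeps softmax inputs moderate for large d_k
    if causal:
        # position i may only attend to positions j <= i
        allowed = np.tril(np.ones(scores.shape, dtype=bool))
        scores = np.where(allowed, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights
```

Every output row is computed with the same matrix products and no recurrence, which is what makes the model so parallelizable during training.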

5.3 A Neural Attention Model for Abstractive Sentence Summarization

-

Main Idea: Summarization based on text extraction is inherently limited, but generation-style abstractive methods have proven challenging to build. In this work, the article proposes a fully data-driven approach to abstractive sentence summarization. The method uses a local attention-based model that generates each word of the summary conditioned on the input sentence. Although the model is structurally simple, it can easily be trained end to end and scales to a large amount of training data. The model shows significant performance gains on the DUC-2004 shared task compared with several strong baselines.

-

Paper Link: https://arxiv.org/pdf/1509.00685v2.pdf

-

Code Link: https://github.com/toru34/rushemnlp2015
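As we recall the paper's attention-based encoder, the attention distribution comes from a bilinear score between the input word embeddings and an embedding of the most recently generated summary words. That distribution is then applied to a locally smoothed version of the input embeddings. The sketch below follows that shape. The window half-width `Q`, the plain average clipped at the sentence edges, and all argument names are assumptions.

```python
import numpy as np

def local_attention_encoder(x_emb, ctx_emb, P, Q=2):
    """x_emb: (M, D) input-sentence word embeddings.
    ctx_emb: (E,) embedding of the last few generated summary words, flattened.
    P: (D, E) bilinear scoring matrix. Q: half-width of the smoothing window."""
    scores = x_emb @ P @ ctx_emb                  # one score per input word, (M,)
    p = np.exp(scores - scores.max())
    p = p / p.sum()
    M = x_emb.shape[0]
    # average each embedding with its neighbours within +/- Q positions (clipped at the edges)
    smoothed = np.stack([x_emb[max(0, i - Q): i + Q + 1].mean(axis=0) for i in range(M)])
    return p @ smoothed, p                        # encoding (D,), attention (M,)
```

Smoothing lets each attended position carry some information about its neighbouring words, a cheap stand-in for phrase-level context.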

5.4 Neural Machine Translation by Jointly Learning to Align and Translate

-

Main Idea: Neural machine translation is a recently proposed approach to machine translation. Unlike traditional statistical machine translation, neural machine translation aims to build a single neural network that can be jointly tuned to maximize translation performance. The models recently proposed for neural machine translation typically belong to the encoder-decoder family: an encoder encodes the source sentence into a fixed-length vector, from which a decoder generates the translation. In this paper, the authors conjecture that the fixed-length vector is a bottleneck in improving the performance of this basic encoder-decoder architecture. They propose to extend it by allowing the model to automatically (softly) search for the parts of the source sentence that are relevant to predicting a target word, without having to form these parts as a hard segment explicitly.

-

Paper Link: https://arxiv.org/pdf/1409.0473v7.pdf

-

Code Link: https://github.com/graykode/nlp-tutorial
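The soft search is an additive alignment model. The previous decoder state s_{i-1} and each encoder annotation h_j are scored as e_ij = v^T tanh(W s_{i-1} + U h_j), the scores are normalized with a softmax, and the context vector is the weighted sum of the annotations. A NumPy sketch, using a row-vector convention and omitting bias terms:

```python
import numpy as np

def additive_attention(s_prev, H, W, U, v):
    """s_prev: (n_s,) previous decoder state s_{i-1}; H: (T, n_h) encoder annotations h_j.
    W: (n_s, n_a), U: (n_h, n_a), v: (n_a,). Returns context c_i and weights alpha_ij."""
    e = np.tanh(s_prev @ W + H @ U) @ v   # alignment scores e_ij, (T,)
    alpha = np.exp(e - e.max())
    alpha = alpha / alpha.sum()
    return alpha @ H, alpha              # c_i = sum_j alpha_ij h_j
```

Because the context is recomputed for every target word, the decoder is no longer limited to a single fixed-length summary of the source sentence.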

5.5 Self-Attention with Relative Position Representations

-

Main Idea: The Transformer introduced by Vaswani et al. (2017) relies entirely on attention mechanisms and achieves state-of-the-art results in machine translation. Unlike recurrent and convolutional neural networks, it does not explicitly model relative or absolute position information in its structure; instead, it requires adding representations of absolute positions to its inputs. In this work, the article presents an alternative approach that extends the self-attention mechanism to efficiently consider representations of the relative positions, or distances, between sequence elements. On the WMT 2014 English-to-German and English-to-French translation tasks, this approach yields improvements of 1.3 BLEU and 0.3 BLEU, respectively, over absolute position representations. Notably, the article observes that combining relative and absolute position representations yields no further improvement in translation quality. The article describes an efficient implementation of the method and casts it as an instance of relation-aware self-attention mechanisms that can generalize to arbitrary graph-labeled inputs.

-

Paper Link: https://arxiv.org/pdf/1803.02155v2.pdf

-

Code Link: https://github.com/tensorflow/tensor2tensor
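As we understand the paper's formulation, relative positions enter self-attention through learned vectors a^K_ij and a^V_ij. These vectors are indexed by the clipped distance j - i and are added to the keys when computing scores and to the values when forming the outputs. A single-head NumPy sketch; the clipping distance `max_dist` and the argument names are illustrative.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def relative_self_attention(x, Wq, Wk, Wv, rel_k, rel_v, max_dist):
    """x: (n, d_in); Wq/Wk/Wv: (d_in, d); rel_k/rel_v: (2*max_dist + 1, d) tables of
    relative-position vectors, one row per clipped distance in [-max_dist, max_dist]."""
    n = x.shape[0]
    q, k, v = x @ Wq, x @ Wk, x @ Wv
    d = q.shape[1]
    dist = np.arange(n)[None, :] - np.arange(n)[:, None]   # dist[i, j] = j - i
    idx = np.clip(dist, -max_dist, max_dist) + max_dist     # row index into the tables
    a_k, a_v = rel_k[idx], rel_v[idx]                       # (n, n, d) each
    scores = np.einsum("id,ijd->ij", q, k[None, :, :] + a_k) / np.sqrt(d)
    w = softmax(scores)
    out = np.einsum("ij,ijd->id", w, v[None, :, :] + a_v)
    return out, w
```

With both tables set to zero, this reduces to ordinary scaled dot-product self-attention. Clipping means that only 2 * max_dist + 1 distinct relative positions need to be learned, regardless of sequence length.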

5.6 A Structured Self-attentive Sentence Embedding

-

Main Idea: The article proposes a new model for extracting an interpretable sentence embedding by introducing self-attention. Instead of a vector, it uses a 2-D matrix to represent the embedding, with each row of the matrix attending to a different part of the sentence. The article also proposes a self-attention mechanism and a special regularization term for the model. As a side effect, the embedding comes with an easy way to visualize which specific parts of the sentence are encoded into it. The model is evaluated on three different tasks: author profiling, sentiment classification, and textual entailment. The results show that it yields a significant performance gain over other sentence embedding methods on all three tasks.

-

Paper Link: https://arxiv.org/pdf/1703.03130v1.pdf

-

Code Link: https://github.com/facebookresearch/pytext
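The attention matrix and the regularization term can be written directly. A = softmax(W_s2 tanh(W_s1 H^T)), with the softmax taken along each row, gives r attention distributions over the n hidden states. The embedding matrix is M = AH, and the penalty ||AA^T - I||_F^2 discourages different rows from attending to the same words. A NumPy sketch with illustrative shapes:

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def structured_self_attention(H, W_s1, W_s2):
    """H: (n, 2u) hidden states of the sentence; W_s1: (d_a, 2u); W_s2: (r, d_a).
    Returns the (r, 2u) embedding matrix M and the (r, n) attention matrix A."""
    A = softmax(W_s2 @ np.tanh(W_s1 @ H.T))   # each of the r rows is a distribution over n words
    return A @ H, A

def redundancy_penalty(A):
    """||A A^T - I||_F^2: small when different rows attend to different words."""
    r = A.shape[0]
    return np.linalg.norm(A @ A.T - np.eye(r), "fro") ** 2
```

Plotting each row of `A` over the input words gives the visualization of which parts of the sentence each row of the embedding encodes.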