Introduction

2024 has been a breakout year for large AI models, with major companies releasing their own large-model application products, such as:

• Tencent’s Yuanbao

• Alibaba’s Tongyi Qianwen

• ByteDance’s Doubao

• Baidu’s Wenxiaoyan

• Moonshot AI’s Kimi

• And many more

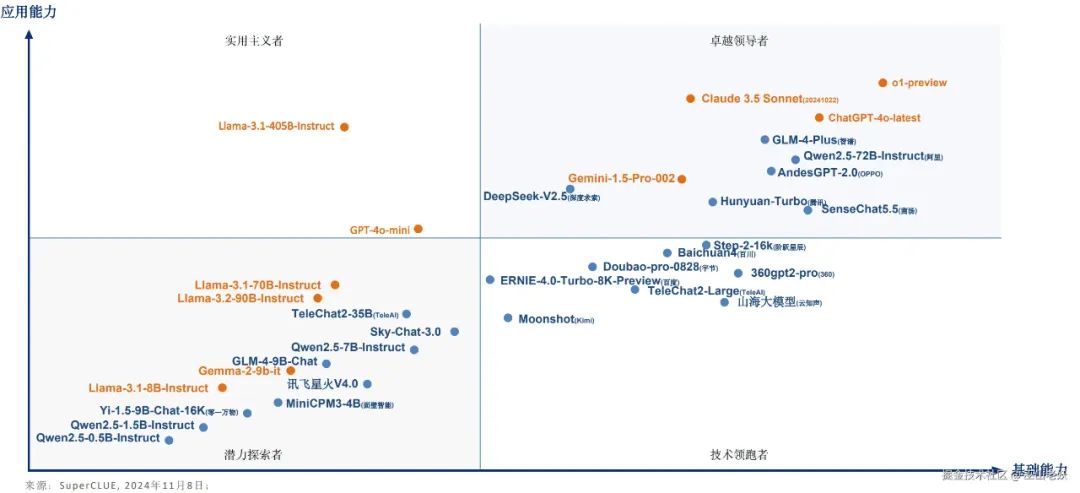

It’s a dazzling array, indeed. Here is a panoramic view:

Image source: https://www.cluebenchmarks.com/

Many people at the forefront of technology have already tried to integrate AI into various aspects of their lives and work, such as office work, coding, writing, and searching, all of which can be enhanced by AI tools. Once you experience the efficiency brought by AI, you will find it indispensable; it will become a powerful assistant in your work and life.

As someone working in the internet industry, however, I want to do more than use AI tools to work more efficiently; I am also interested in the technologies behind them. This article is a popular-science overview of the key technical concepts behind these AI applications, intended to make the current trends in large AI models easier to follow.

LLM

The Large Language Model (LLM) is the foundation of current AI applications. Without it, the present wave of AI would not have happened.

An LLM is a deep-learning model used for tasks involving natural language. LLMs typically consist of neural networks with billions to trillions of parameters, trained on vast amounts of text data to learn the grammar, semantics, and contextual information of language, enabling them to understand and generate natural language text.

Characteristics

1. Massive Scale: LLMs usually have a massive parameter scale, reaching billions or even hundreds of billions of parameters, allowing them to capture more language knowledge and complex grammatical structures.

2. Pre-training and Fine-tuning: LLMs adopt a learning method of pre-training and fine-tuning. They first pre-train on large-scale text data to learn general language representations and knowledge, then fine-tune to adapt to specific tasks, performing excellently in various NLP tasks.

3. Context Awareness: LLMs have powerful context awareness capabilities when processing text, enabling them to understand and generate text content that relies on previous content.

4. Multilingual Support: LLMs can be used for multiple languages, not limited to English. Their multilingual capabilities make cross-cultural and cross-language applications easier.

5. Multimodal Support: Some LLMs have expanded to support multimodal data, including text, images, and speech. This means they can understand and generate content across different media types, enabling more diverse applications.

6. Emergent Abilities: LLMs exhibit surprising emergent abilities, which are performance improvements that appear in large-scale models but are not evident in smaller models.

Application Prospects

LLMs have been widely applied in text generation, automatic translation, information retrieval, summary generation, chatbots, virtual assistants, and many other fields, profoundly impacting people’s daily lives and work. With continuous technological development, large language models will play an even greater role in the future.

Training Methods

Training a language model requires providing it with a large amount of text data, which the model uses to learn the structure, grammar, and semantics of human language. No human-written labels are needed: training uses self-supervised learning (often loosely called unsupervised learning), in which the model derives its own labels from the input by predicting the next word or token in a sequence from the ones that precede it.
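To make the idea of "the text labels itself" concrete, here is a minimal sketch in Python. It is only an illustration: the tiny corpus, the whitespace tokenization, and the helper names `make_training_pairs`, `train_bigram`, and `predict_next` are all assumptions made for this example, and a count-based bigram table stands in for a real neural network.

```python
from collections import Counter, defaultdict


def make_training_pairs(tokens, context_size=3):
    """Build (context, next_token) pairs; the labels come from the text itself."""
    pairs = []
    for i in range(1, len(tokens)):
        context = tuple(tokens[max(0, i - context_size):i])
        pairs.append((context, tokens[i]))
    return pairs


def train_bigram(tokens):
    """Count how often each token follows the previous one (a toy stand-in for an LLM)."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequent follower of `token`, or None if it has none."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy corpus, split on whitespace instead of a real tokenizer.
corpus = "the cat sat on the mat and the cat slept".split()
pairs = make_training_pairs(corpus)
model = train_bigram(corpus)

print(pairs[:3])                   # the first few (context, label) pairs
print(predict_next(model, "the"))  # most likely token after "the"
```

Nobody annotated this data by hand: every label is simply the token that comes next in the text. Real LLMs follow the same recipe, but replace the count table with a neural network trained on a vastly larger corpus.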

Technical Architecture

LLMs are typically built on the Transformer architecture, which underlies their strong performance across NLP tasks. The original Transformer consists of an encoder and a decoder, while GPT-style models use only the decoder stack. In both cases, a self-attention mechanism lets each token weigh its relationship to the other tokens in the sequence.
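The self-attention step can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not a production implementation: the function names and the toy vectors are made up for this example, there is a single attention head, and the learned projection matrices that would normally produce the queries, keys, and values are omitted.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values, causal=False):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V, one row per token."""
    d = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        # With causal=True a token only attends to itself and earlier tokens,
        # which is the masking used by decoder-style (GPT-like) models.
        visible = range(i + 1) if causal else range(len(keys))
        row = softmax([dot(q, keys[j]) / math.sqrt(d) for j in visible])
        row = row + [0.0] * (len(keys) - len(row))  # masked positions get weight 0
        weights.append(row)
        outputs.append([
            sum(w * v[k] for w, v in zip(row, values))
            for k in range(len(values[0]))
        ])
    return outputs, weights


# Three toy token vectors, used directly as queries, keys, and values.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = self_attention(x, x, x, causal=True)
for row in attn:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence. The weights in a row sum to 1, and with the causal mask the positions to the right of the current token are always 0.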

As a core technology in the field of natural language processing, LLMs are continuously driving the development of artificial intelligence, with vast potential and application prospects.

Domestic and international AI large model quadrants:

From https://www.cluebenchmarks.com/superclue_2410

GPT

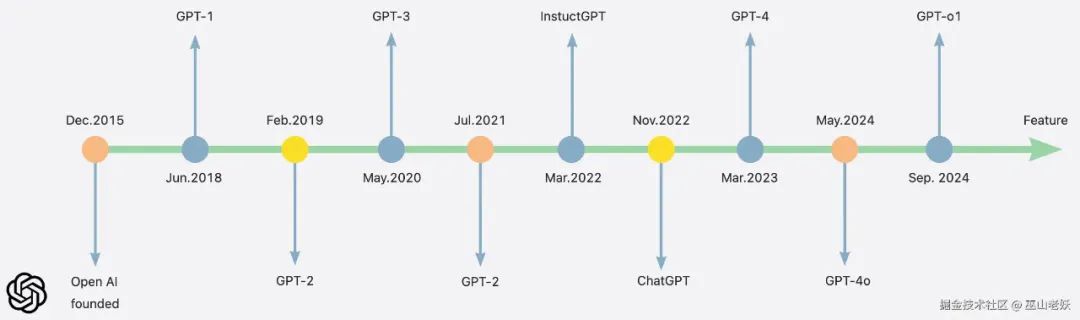

The GPT (Generative Pre-trained Transformer) series is a family of large language models developed by OpenAI that has driven significant progress in the field of natural language processing (NLP). Here is the development timeline of the GPT series:

1. GPT-1 (2018):

• GPT-1 is the first model in the series, based on the Transformer architecture, with 117 million parameters. It relies primarily on unsupervised pre-training followed by fine-tuning, and demonstrated the effectiveness of that combination across various NLP tasks.

2. GPT-2 (2019):

• GPT-2 increased the parameter count to 1.5 billion and showcased powerful text generation capabilities. Due to concerns about potential misuse, OpenAI initially did not fully release the model, gradually opening access under public pressure.

3. GPT-3 (2020):

• GPT-3 has 175 billion parameters, making it the largest language model at that time. With its outstanding text generation and context understanding capabilities, GPT-3 quickly sparked widespread application and research interest.

4. ChatGPT (2022):

• At the end of 2022, OpenAI launched ChatGPT, based on the GPT-3.5 model, which was made publicly available as a free research preview. ChatGPT is known for its conversational abilities and can generate coherent and relevant text responses.

5. GPT-4 (2023):

• OpenAI released GPT-4 on March 14, 2023, at the time the latest model in the GPT series. OpenAI has not disclosed its size; unofficial reports put it at about 1.76 trillion parameters. GPT-4 can process up to 25,000 words at once, reportedly about eight times what ChatGPT could handle at launch. It hallucinates less than previous versions and can accept both text and image prompts, allowing users to define tasks in visual and linguistic domains.

6. GPT-4o (2024):

• GPT-4o (with “o” representing “omni”) can process and generate text, images, and audio, integrating the three in a single natively multimodal model. GPT-4o supports real-time voice interaction, providing a more human-like experience. Additionally, it has improved document handling capabilities, performance, and structured output.

7. o1 (2024):

• The o1 model (called GPT-o1 in some sources) was released by OpenAI in preview form on September 12, 2024 (September 13 Beijing time). It marks a significant advance in AI’s ability to handle complex reasoning tasks, which OpenAI described as the “beginning of a new paradigm.” Compared with the previous GPT-4o model, o1 shows significant improvements across subjects including mathematics, physics, chemistry, and economics, and is especially strong at solving doctoral-level physics problems.

8. GPT-5 (planned):

• At the time of writing, OpenAI plans to release GPT-5, aiming to provide better personalization, more diverse and accurate responses, and enhanced creativity.

The development of the GPT series not only enhances AI’s abilities to understand and generate human language but also sparks discussions about the ethical and social implications of these technologies. With each iteration of the model, the GPT series continues to set new benchmarks in the field of NLP, and its applications are expanding across various domains, from text completion to story generation.

AIGC

AIGC (Artificial Intelligence Generated Content) is a new type of content production method that utilizes generative artificial intelligence technology to automatically create text, images, videos, and other content.

The AI applications mentioned in the introduction are essentially real-world applications of AIGC, which relies on technologies like LLMs to generate content. LLMs can generate various forms of content, such as articles, stories, and code, making them a core component of AIGC technology.

Compared to the familiar UGC (User Generated Content) and PGC (Professionally Generated Content), the emergence of AIGC will bring significant changes and advancements to content creation.

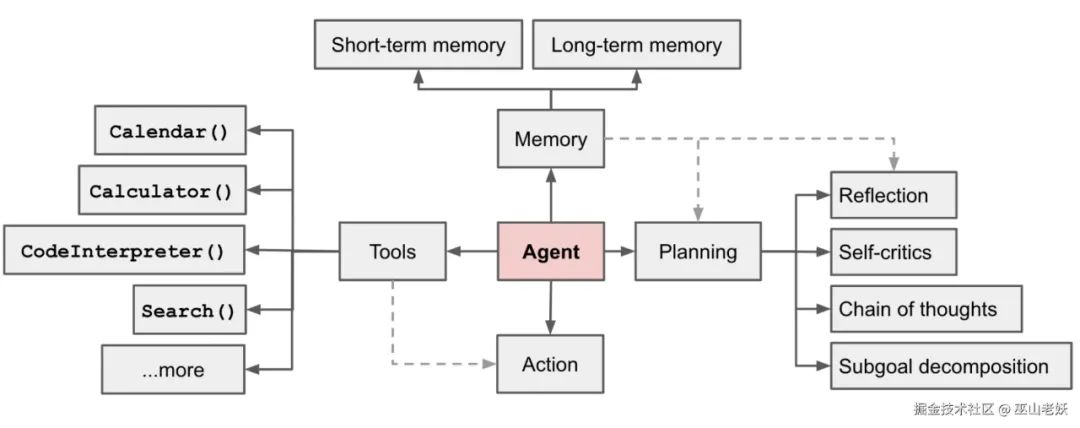

AI Agent

An AI Agent is an intelligent entity capable of perceiving its environment, making decisions autonomously, and executing actions. Built on large language models (LLMs), it combines perception, planning, memory, and tool use, which enables it to automate the execution of complex tasks.

The architecture diagram of the intelligent agent is as follows:

Source: https://lilianweng.github.io/posts/2023-06-23-agent/

Core components of LLM Agent:

• Planning: Using the LLM to decompose tasks, breaking down a user question into multiple sub-questions.

• Memory: Short-term and long-term memory, where short-term memory refers to the context of the LLM, and long-term memory refers to external vector storage.

• Tool: External tools the agent can call, such as the Google Search API or a calculator.

• Action: The action module is the part where the agent executes decisions or responses. For different tasks, the agent system has a complete set of action strategies from which it chooses the actions to execute, such as memory retrieval, reasoning, learning, and programming.
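The four components above can be wired together in a small toy agent. The sketch below is an illustration under loud assumptions: there is no real LLM, so `plan` and `choose_action` use simple string rules where a real agent would prompt the model; the `KNOWLEDGE` dictionary is a hypothetical stand-in for a search API; and a plain dictionary plays the role of the external vector store.

```python
import operator
import re

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
KNOWLEDGE = {"capital of france": "Paris"}  # hypothetical stand-in for a search API


def calculator(expression):
    """Tool: evaluate 'a op b' for non-negative integers, without eval()."""
    match = re.fullmatch(r"\s*(\d+)\s*([+\-*/])\s*(\d+)\s*", expression)
    if not match:
        return "unsupported expression"
    a, op, b = int(match.group(1)), match.group(2), int(match.group(3))
    if op == "/" and b == 0:
        return "division by zero"
    return str(OPS[op](a, b))


def search(query):
    """Tool: look the query up in the toy knowledge base."""
    return KNOWLEDGE.get(query, "no result")


TOOLS = {"calculator": calculator, "search": search}


def plan(question):
    """Planning: a real agent would ask the LLM; here we split on ' and '."""
    parts = [p.strip(" ?") for p in question.split(" and ")]
    return [p for p in parts if p]


def choose_action(sub_question):
    """Action selection: pick a tool and its input for one sub-question."""
    arithmetic = re.search(r"\d+\s*[+\-*/]\s*\d+", sub_question)
    if arithmetic:
        return "calculator", arithmetic.group(0)
    return "search", re.sub(r"^what is (the )?", "", sub_question.lower())


def run_agent(question, long_term_memory):
    """Plan, then answer each sub-question from memory or by calling a tool."""
    short_term_memory = []  # plays the role of the LLM context window
    for sub_question in plan(question):
        if sub_question in long_term_memory:  # recall instead of re-running a tool
            observation = long_term_memory[sub_question]
        else:
            tool_name, tool_input = choose_action(sub_question)
            observation = TOOLS[tool_name](tool_input)
            long_term_memory[sub_question] = observation
        short_term_memory.append((sub_question, observation))
    return short_term_memory


memory = {}  # a dict standing in for an external vector store
for step in run_agent("What is 6 * 7 and what is the capital of France?", memory):
    print(step)
```

The control flow is the part that carries over to real systems: decompose the request, check memory, pick a tool, record the observation. In a production agent each of the rule-based functions would be replaced by an LLM call, and the dictionary lookup by a similarity search over embeddings.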

An overview of some outstanding AI Agents

Image source: https://github.com/e2b-dev/awesome-ai-agents

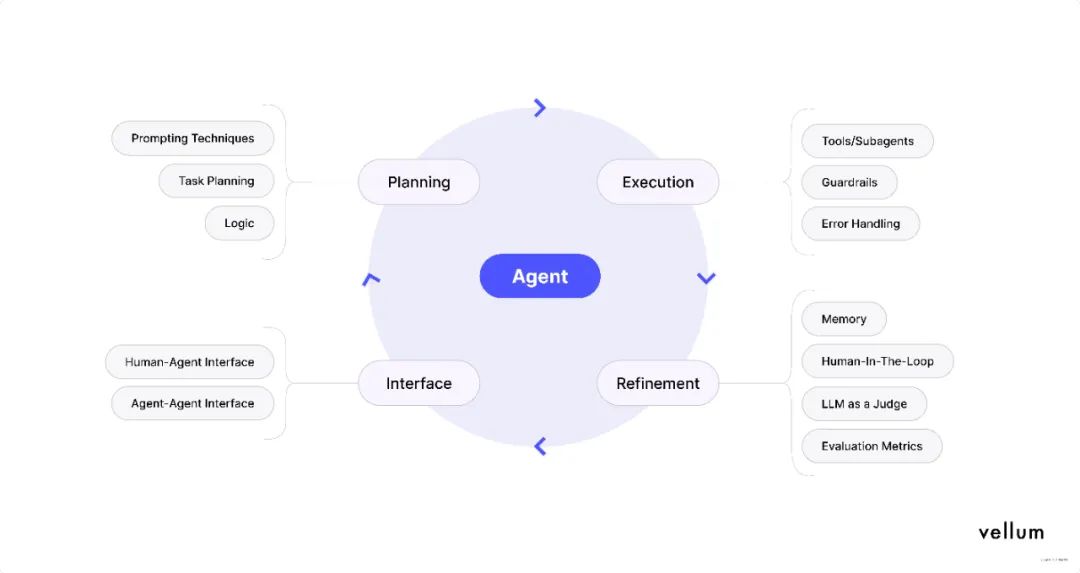

Agentic AI

Agentic AI emphasizes the autonomy and agency of AI, meaning that AI systems can complete tasks independently without direct human intervention. AI Agents are key to achieving Agentic AI, and LLMs provide the capabilities for AI Agents to process language and understand their environment.

Components of Agentic Workflow:

Image source: https://www.vellum.ai/blog/agentic-workflows-emerging-architectures-and-design-patterns

Some key features:

1. Autonomy: Agentic AI systems can operate without direct human intervention. They can independently identify problems, devise solutions, and execute those solutions.

2. Social Ability: These systems can interact and communicate with other agents (whether human or other AI systems) to collaborate on tasks.

3. Reactivity: Agentic AI can perceive its environment and respond quickly to changes. It can adjust its behavior based on external events and changes.

4. Pro-activeness: In addition to reacting to changes in the environment, Agentic AI can also take proactive actions to achieve its designed goals, even anticipating future needs or problems.

5. Reasoning: These systems possess logical reasoning abilities, enabling them to make decisions based on available information and predict the potential outcomes of their actions.

6. Learning: Agentic AI systems can learn from experience and improve their performance and efficiency over time.

7. Personalization: They can adjust based on user behavior and preferences to provide more customized services.

8. Adaptability: Agentic AI systems can adapt to changing conditions and demands, flexibly adjusting strategies to maintain effectiveness.

9. Transparency: Although Agentic AI systems can operate independently, they are often designed with transparency to allow humans to understand and track their decision-making processes.

10. Ethics and Compliance: Agentic AI systems consider ethical and legal frameworks in their design, ensuring that their behavior aligns with social norms and regulations.

The application range of Agentic AI is very broad, from automated customer service and smart home control to self-driving cars and complex business process management. With technological advancements, Agentic AI systems are becoming increasingly complex and intelligent, playing an increasingly important role in improving efficiency, optimizing decision-making, and enhancing user experience.

Conclusion

• LLM is the foundational technology among these concepts, providing the ability to understand and generate natural language that the others build on.

• ChatGPT is a specific application of LLM, focusing on conversational systems.

• AIGC relies on technologies like LLM to generate content.

• AI Agent is an advanced application of LLM, combining other technologies to achieve more complex tasks.

• Agentic AI is the current direction of development, emphasizing autonomy and agency, with AI Agents being key to achieving this goal.

Final Thoughts

While putting this article together, I was struck by how quickly the technology is changing. Since OpenAI released ChatGPT, breakthroughs that challenge our understanding have arrived every few months. What these developments mean for ordinary people is something we all need to engage with and think through. The future is here, and we need to approach the changes in the world with a more open and inclusive mindset. If we can’t beat them, let’s join them.