How Does Tesla Achieve City Autonomous Driving with Cameras?

Recently, Tesla updated its autonomous driving software to version 2020.12, which adds automatic recognition of traffic lights and stop signs. Tesla vehicles equipped with the Full Self-Driving (FSD) package receive the feature through an OTA upgrade: the car stops at red lights and accelerates automatically when the light turns green.

This feature’s official release lays the groundwork for the upcoming Tesla city road autonomous driving and marks another step towards Tesla’s goal of “fully autonomous driving”.

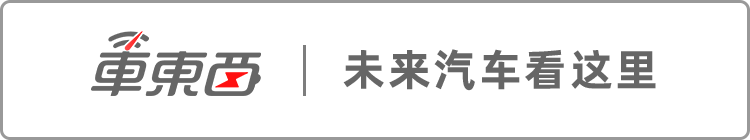

▲ Tesla’s traffic light recognition feature

Recognizing traffic lights and stop signs is not easy. While it is straightforward for drivers, it poses a significant challenge for machines that are not yet very intelligent. For example, different lanes have different traffic lights, and stop signs come in various forms and can be obscured, which tests the efficiency and accuracy of machine recognition.

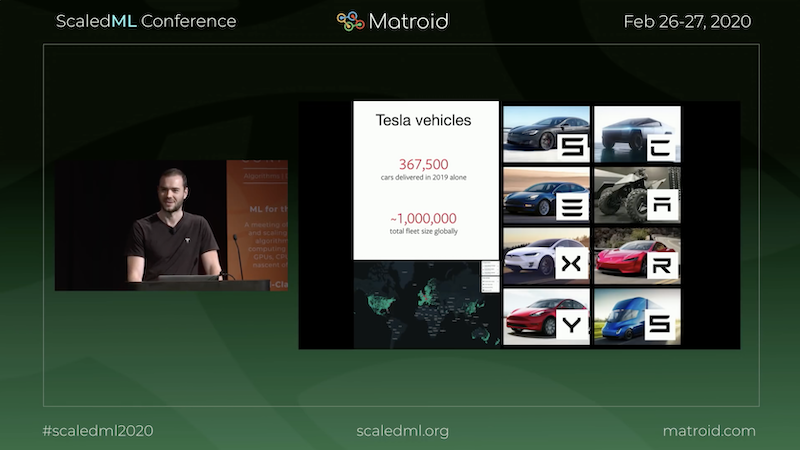

In February this year, Andrej Karpathy, Tesla’s Senior Director of AI, gave a speech explaining how Tesla uses its vision system to recognize the road environment and applies this to autonomous driving.

▲ Video of Andrej Karpathy’s speech

Tesla uses a “shadow mode” where the autonomous driving computer performs real-time calculations while the driver is driving but does not control the vehicle. If there is a discrepancy between the driver’s actions and the machine’s calculations, Tesla’s autonomous driving computer records the case and uploads it to Tesla headquarters. After collecting a large amount of data, Tesla categorizes different scenarios, and through machine learning, the recognition algorithm becomes “smarter”.

When applied to vehicles, Tesla also uses machine learning to compute a three-dimensional scene from two-dimensional images, allowing for accurate judgment of distances to obstacles, thus achieving more precise autonomous driving capabilities.

Tesla’s Ability to Wait at Red Lights Brings Us Closer to City Autonomous Driving

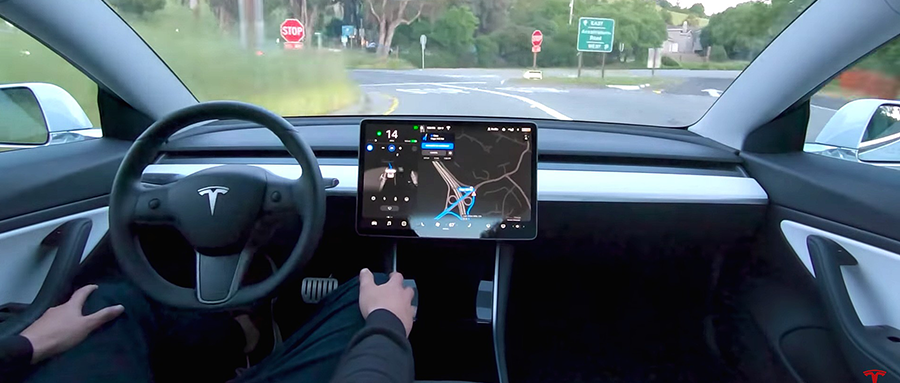

On April 24, Tesla rolled out the 2020.12 software update to users. In this update, Tesla officially introduced the feature of “recognizing traffic lights and stop signs and responding accordingly”.

After the software update, Tesla vehicles equipped with FSD can automatically recognize traffic lights and stop signs. If there is a red light or stop sign ahead, the vehicle shows a prompt on its screen, informing the driver of the distance to the stop line.

If the driver does not take timely braking measures, the vehicle’s braking system will intervene and gently stop before the stop line. Once the green light is on, the vehicle will automatically accelerate forward. If the driver confirms safety at an intersection where stopping is required and presses the accelerator pedal, the vehicle will also accelerate forward.

▲ Owner testing the Tesla software update

During driving, the vehicle can detect green lights, flashing yellow lights, and red lights ahead and display them on the vehicle’s screen. If a red light or stop sign is detected ahead, the vehicle will remind the driver of the stop line’s location in advance; if the driver does not respond, the vehicle will automatically slow down and stop before the stop line. If the driver confirms safety and wishes to proceed, pressing the accelerator pedal will allow the vehicle to continue to accelerate forward.
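The behavior described above can be sketched as a small decision function. This is only an illustration of the logic as the article describes it, not Tesla’s implementation: the names `Signal`, `Action`, `Inputs`, and `decide`, and the 50-meter braking threshold, are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Signal(Enum):
    NONE = "none"
    GREEN = "green"
    FLASHING_YELLOW = "flashing_yellow"
    RED = "red"
    STOP_SIGN = "stop_sign"


class Action(Enum):
    CONTINUE = "continue"
    WARN_DRIVER = "warn_driver"
    BRAKE_TO_STOP_LINE = "brake_to_stop_line"


@dataclass
class Inputs:
    signal: Signal
    distance_to_stop_line_m: float
    driver_braking: bool
    accelerator_pressed: bool


# Hypothetical threshold: inside this distance the car brakes on its own
# if the driver has not reacted. The real value is not given in the article.
BRAKE_DISTANCE_M = 50.0


def decide(inputs: Inputs) -> Action:
    """Pick an action for one control cycle."""
    # Green, flashing yellow, or nothing detected: keep driving.
    if inputs.signal not in (Signal.RED, Signal.STOP_SIGN):
        return Action.CONTINUE
    # The driver has confirmed it is safe by pressing the accelerator.
    if inputs.accelerator_pressed:
        return Action.CONTINUE
    # Still far away, or the driver is already braking: only show the prompt.
    if inputs.driver_braking or inputs.distance_to_stop_line_m > BRAKE_DISTANCE_M:
        return Action.WARN_DRIVER
    # Close to the stop line with no driver response: stop the car.
    return Action.BRAKE_TO_STOP_LINE
```

For example, `decide(Inputs(Signal.RED, 30.0, False, False))` returns `Action.BRAKE_TO_STOP_LINE`, while the same call with `accelerator_pressed=True` returns `Action.CONTINUE`.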

However, since this feature has just been released, its operational logic is relatively conservative, and in some cases the vehicle may not attempt to pass through intersections on its own. As more vehicles use the feature, Tesla can use the data they collect to further train its algorithms, making the feature more refined.

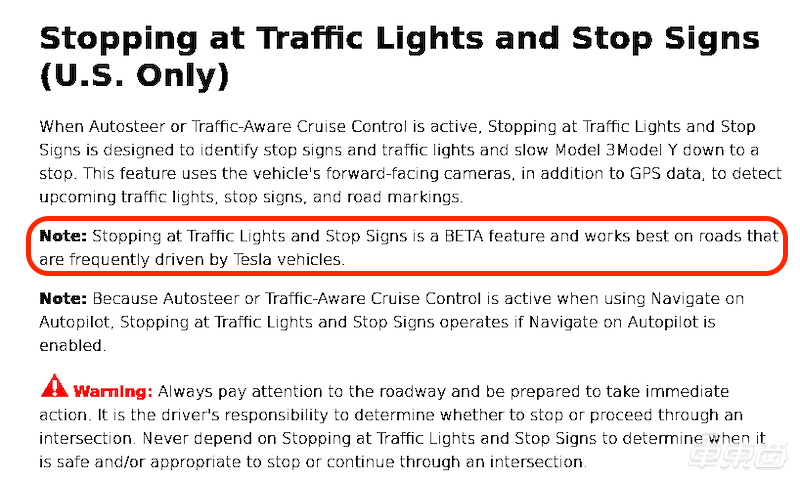

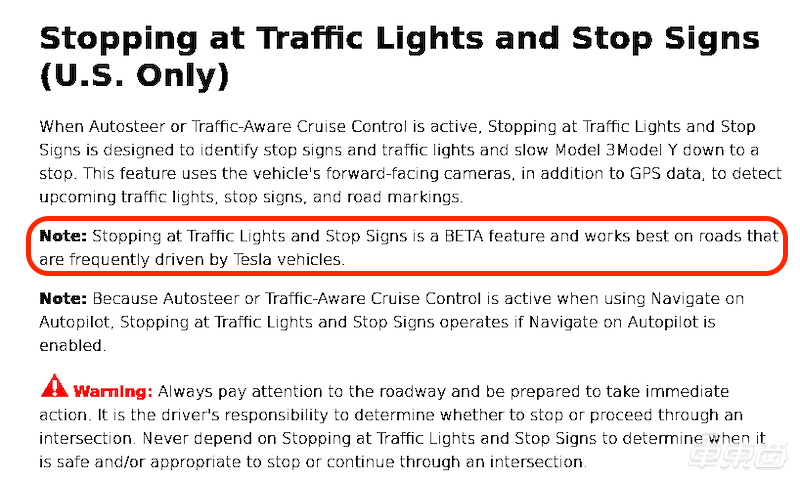

According to a previously released Tesla autonomous driving manual, this feature does have certain limitations. Tesla states that while the vehicle can monitor traffic lights and stop signs ahead, the driving responsibility lies entirely with the driver. The driver must always pay attention to the situation on the roadway and be ready to take emergency measures.

▲ Tesla’s traffic light and stop sign recognition feature manual (US version)

It is worth noting that the user manual states that the more often a Tesla vehicle travels on roads with traffic lights and stop signs, the higher the recognition accuracy will be. This suggests that Tesla relies on deep learning to improve the algorithm’s performance: the more times the same scene is learned, the higher the recognition accuracy.

Moreover, the traffic light and stop sign recognition feature is not available in all scenarios. Tesla states in the manual that in the US, this feature cannot be enabled in environments such as railroad crossings, restricted areas, toll booths, pedestrian crossings, unclear or temporary traffic lights, complex signals, and lane indicators.

This feature update represents a significant advancement for Tesla’s FSD autonomous driving system, bringing Tesla closer to city road autonomous driving.

In February this year, Andrej Karpathy, Tesla’s Senior Director of AI, revealed the latest progress of the Tesla autonomous driving system during a speech at the ScaledML conference. Using machine learning algorithms, Tesla can accurately identify roadside signals and signs and calculate the distance to stop lines and obstacles.

What Makes Tesla Strong? Millions of Owners Help Tesla Test Software

In his speech, Andrej Karpathy first explained how Tesla’s autonomous driving system works. Tesla primarily relies on its vision system to collect image signals; the autonomous driving computer processes them and then controls the vehicle’s speed and steering to achieve autonomous driving. In this process, the computation performed by the autonomous driving computer is the most critical part and depends on AI algorithms.

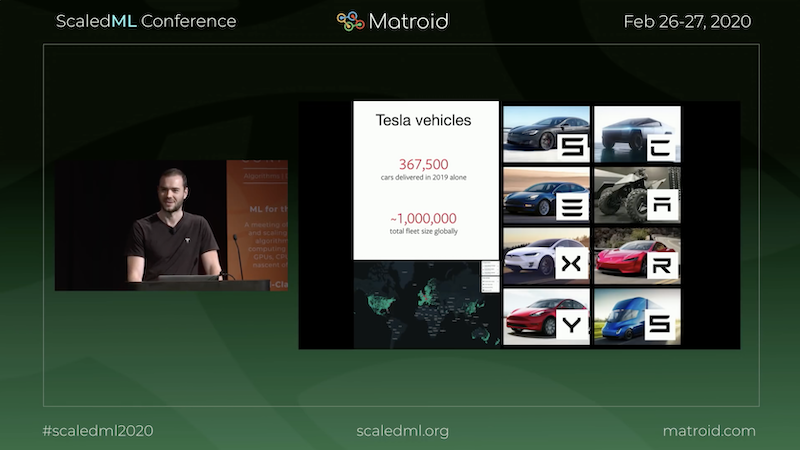

▲ Andrej Karpathy at the speech event

The autonomous driving road test data released by the California DMV in February this year shows that Tesla officially conducted only 12.2 miles (approximately 19.6 kilometers) of autonomous driving road tests throughout 2019. Compared to companies like Baidu, Waymo, and Cruise, which log hundreds of thousands to millions of miles of autonomous driving tests each year, Tesla has conducted hardly any official testing.

If Tesla does not conduct a large number of autonomous driving tests, how can it improve? The answer is that Tesla’s testers are the millions of Tesla owners, relying on the “shadow mode” for autonomous driving testing.

In 2016, Tesla introduced “shadow mode”: while the driver operates the car, Tesla vehicles equipped with HW1 or later autonomous driving computers run autonomous driving calculations in real time without controlling the vehicle’s direction or speed. If the driver’s actions differ significantly from those computed by the autonomous driving computer, the computer automatically records the case and uploads it to Tesla.
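A minimal sketch of this comparison, under stated assumptions, might look like the following. The `Controls` fields, the two tolerance values, and the in-memory `upload_queue` are hypothetical; the article does not say how Tesla defines a “significant” discrepancy or how cases are transmitted.

```python
from dataclasses import dataclass


@dataclass
class Controls:
    steering_deg: float  # steering wheel angle
    accel_mps2: float    # positive = accelerating, negative = braking


# Hypothetical thresholds for what counts as a "significant" discrepancy.
STEERING_TOLERANCE_DEG = 10.0
ACCEL_TOLERANCE_MPS2 = 1.5


def is_significant_discrepancy(driver: Controls, computer: Controls) -> bool:
    """True when the driver and the computer disagree by more than the tolerances."""
    return (
        abs(driver.steering_deg - computer.steering_deg) > STEERING_TOLERANCE_DEG
        or abs(driver.accel_mps2 - computer.accel_mps2) > ACCEL_TOLERANCE_MPS2
    )


def shadow_step(driver: Controls, computer: Controls, upload_queue: list) -> Controls:
    """Run one shadow-mode cycle.

    The computer's output is only compared, never applied: the function
    always returns the driver's controls as the ones the vehicle follows.
    """
    if is_significant_discrepancy(driver, computer):
        # Record the case so it can be uploaded later.
        upload_queue.append({"driver": driver, "computer": computer})
    return driver
```

In this sketch, a driver braking hard while the computer would have kept accelerating adds one entry to `upload_queue`, and a small steering correction adds nothing.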

Since its formal implementation in 2018, shadow mode has processed over 3 billion miles (approximately 4.83 billion kilometers) of driving mileage. Every day, Tesla’s autonomous driving R&D team receives a large number of cases: the driver stops, but the autonomous driving computer continues; the driver slightly adjusts the direction, but the autonomous driving computer continues straight; of course, there are also cases where drivers collide, but the autonomous driving computer avoids danger.

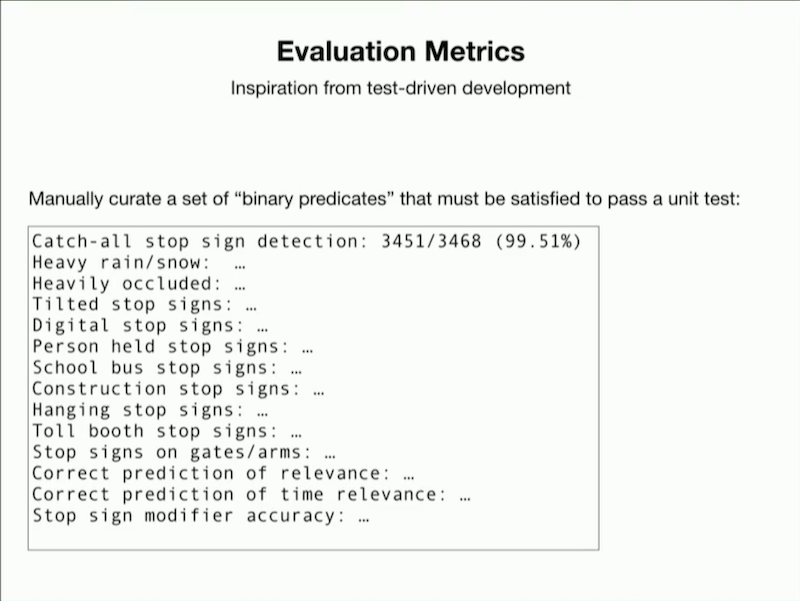

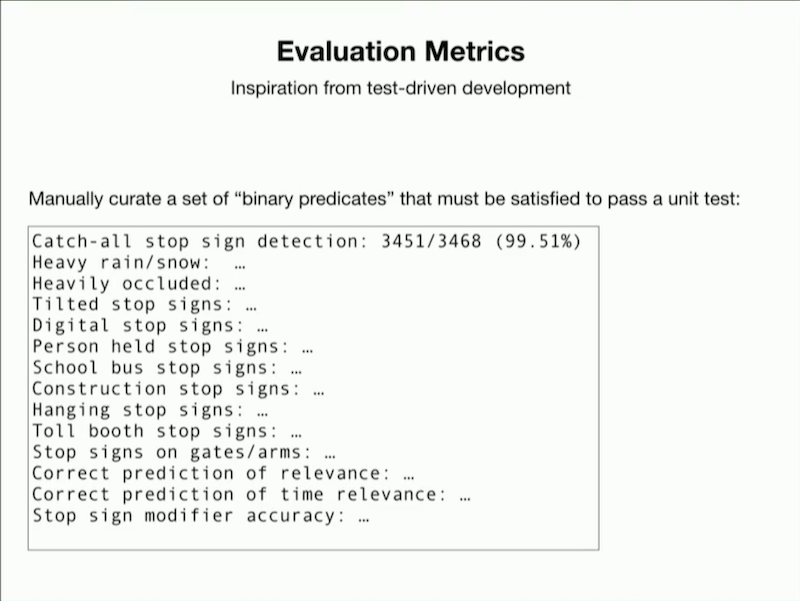

For example, not all stop signs are clearly visible; some are obscured by leaves, some are temporary signs, some are very blurry at night, and some stop signs require stopping while turning left but not when turning right… These unclear signs can interfere with the autonomous driving computer, and if misidentified, could lead to driving accidents.

▲ Top image: unclear stop sign; bottom image: conditional stop sign

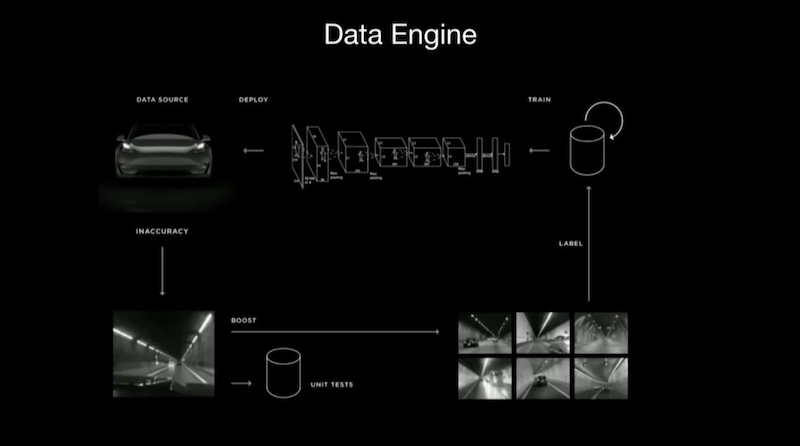

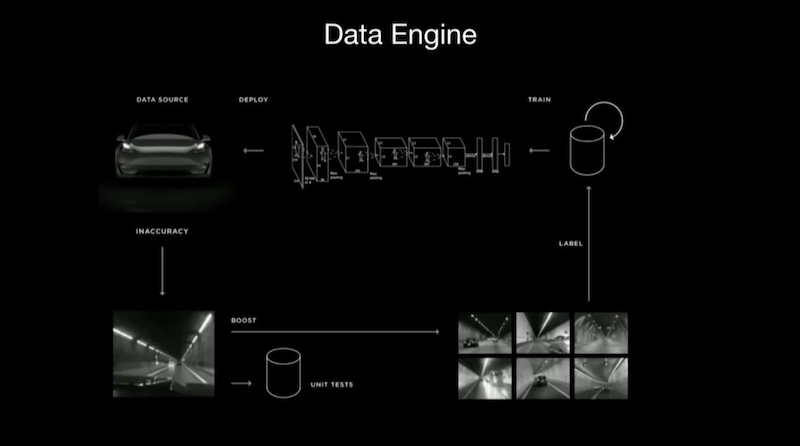

To solve this problem, Tesla uses a “data engine” to train its algorithms. First, vehicles collect data and send it back to Tesla’s autonomous driving R&D department. Tesla then groups similar scenarios together and trains its models on each group, making the algorithms more powerful and “smarter”.

▲ Tesla’s “data engine” machine learning model

After training the algorithms, Tesla continuously monitors the recognition accuracy of similar scenarios by the autonomous driving computer, forming the following table.

From this, it can be seen that Tesla has identified at least 14 possible scenarios for stop signs, including signs in heavy rain or snow, obscured signs, signs on school buses, and even signs on doors and handheld signs.

This means that Tesla’s goal is to recognize signs under various special conditions.

▲ Accuracy evaluation of the same sign in different scenarios

Returning to the main topic, after training the model, Tesla tests the algorithms through shadow mode, re-evaluating the recognition accuracy of each scenario. An increase in accuracy means that the algorithm is gradually improving. Once the recognition accuracy reaches a high level, Tesla can consider updating the function for all vehicles, increasing autonomous driving capabilities and use cases.
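The evaluation loop described here can be sketched roughly as follows. The function names, the scenario labels, and the 99% release threshold are assumptions for illustration; the article does not state what accuracy Tesla requires before shipping a feature.

```python
from collections import defaultdict

# Hypothetical bar that every scenario must clear before release.
RELEASE_THRESHOLD = 0.99


def accuracy_by_scenario(results):
    """Compute accuracy per scenario.

    `results` is an iterable of (scenario, predicted_label, true_label).
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for scenario, predicted, actual in results:
        total[scenario] += 1
        if predicted == actual:
            correct[scenario] += 1
    return {s: correct[s] / total[s] for s in total}


def scenarios_needing_more_data(accuracy, threshold=RELEASE_THRESHOLD):
    """Scenarios still below the bar, i.e. candidates for more data collection."""
    return sorted(s for s, acc in accuracy.items() if acc < threshold)


def ready_for_release(accuracy, threshold=RELEASE_THRESHOLD):
    """True only when every evaluated scenario meets the threshold."""
    return bool(accuracy) and all(acc >= threshold for acc in accuracy.values())
```

Tracking accuracy per scenario rather than as one overall number is what lets a weak case, such as obscured signs, stand out even when the average looks good.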

Here’s an interesting detail.

Tesla previously pushed an update for recognizing traffic cones, and a user conducted an extreme test to understand which traffic cones Tesla could recognize.

This test involved not only traffic cones of different sizes and heights but also a human dressed as a traffic cone as a prop. From the video, it can be seen that as long as the human in the traffic cone outfit is moving or standing, Tesla can recognize this as a person. However, if the person squats down and remains still, they will be recognized as a traffic cone.

▲ An overseas user dressed as a traffic cone did not fool Tesla

This also indicates that Tesla considers many special cases when recognizing traffic cones, which contributes to the feature’s accuracy.

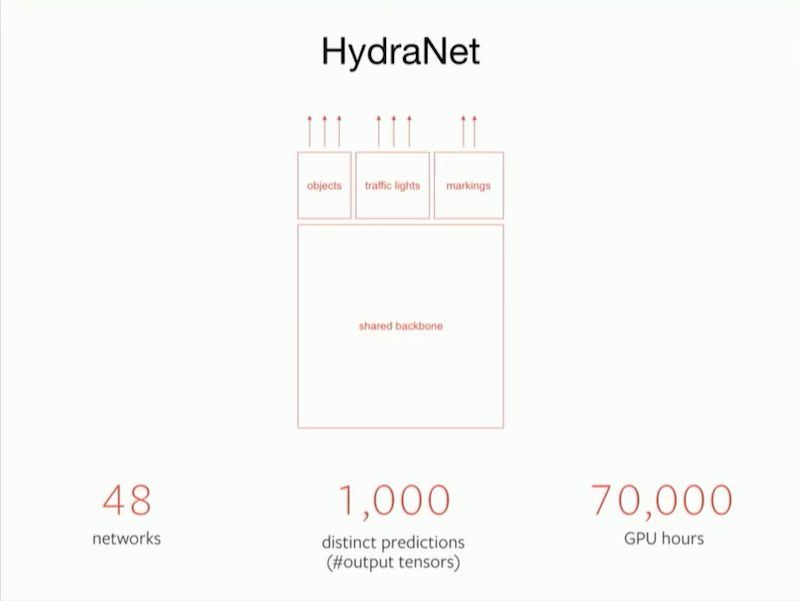

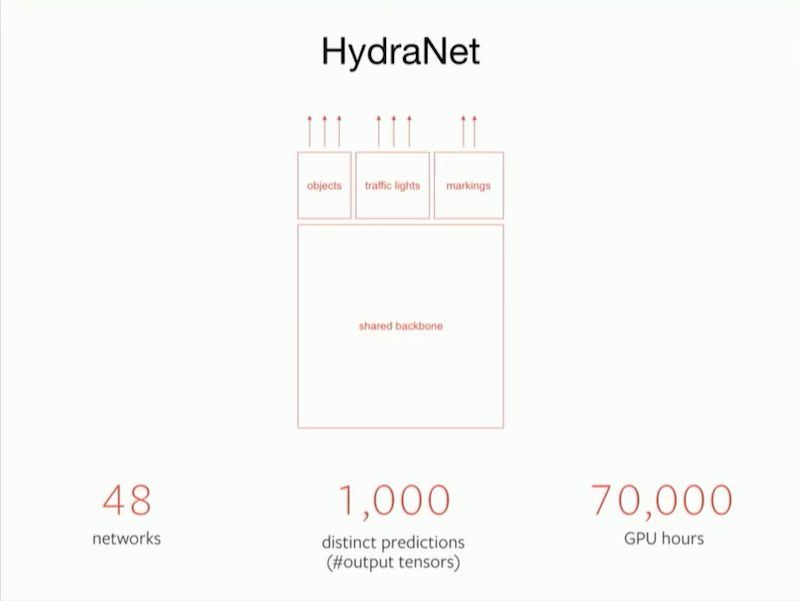

At the algorithm level, Tesla refers to its deep learning network as HydraNet. The underlying code is shared, and the entire HydraNet consists of 48 different neural networks that together output 1,000 different prediction tensors. In theory, this super network can detect 1,000 different objects simultaneously. Completing these calculations is not simple; Tesla has already spent 70,000 GPU hours training its deep learning models.

▲ Tesla’s HydraNet network
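One way to picture a network with a shared base and many outputs is a common feature extractor feeding several task-specific heads. The toy below is a sketch under that assumption and is not Tesla’s architecture: `SharedBackbone`, `make_head`, and `ToyHydraNet` are hypothetical names, and the “features” are simple brightness statistics rather than learned ones.

```python
class SharedBackbone:
    """Stand-in for the shared feature extractor; counts how often it runs."""

    def __init__(self):
        self.calls = 0

    def __call__(self, pixels):
        self.calls += 1
        # Toy "features": mean and peak brightness of the frame.
        return [sum(pixels) / len(pixels), max(pixels)]


def make_head(weights, bias):
    """Build a task head: a single linear layer over the shared features."""
    def head(features):
        return sum(w * f for w, f in zip(weights, features)) + bias
    return head


class ToyHydraNet:
    """Several heads reading from one backbone."""

    def __init__(self, backbone, heads):
        self.backbone = backbone
        self.heads = heads

    def forward(self, pixels):
        # The expensive shared computation happens once per frame...
        features = self.backbone(pixels)
        # ...and every task head reuses the same features.
        return {name: head(features) for name, head in self.heads.items()}
```

The point of the design is cost: adding another head adds one more output without repeating the shared computation, so `backbone.calls` stays at one per frame however many heads are attached.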

Although the workload is enormous, most of the work is handled by machines, and Tesla’s AI team consists of only a few dozen people, which is indeed small compared to the hundreds or even thousands of employees at other autonomous driving companies.

2D Images Turn into 3D: Algorithms Can Also Modify Their Own Code

After extensive machine learning training, Tesla’s algorithms gradually mature, and this set of algorithms will be distributed to every Tesla car through OTA upgrades. During the actual driving process, further trial and error can refine the algorithms.

The ultimate goal of developing these algorithms is to give Tesla vehicles autonomous driving capability, which also requires the cooperation of the hardware on the vehicle.

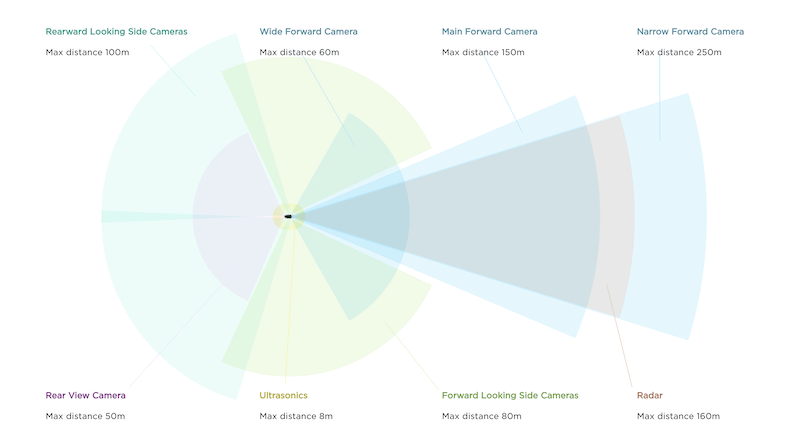

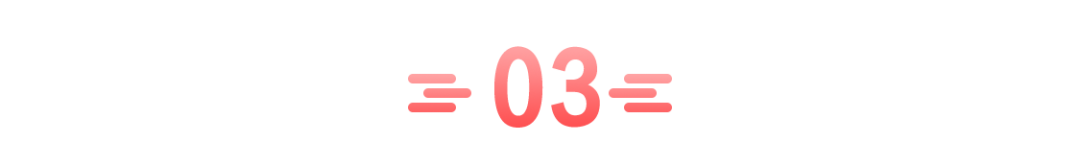

Tesla vehicles are equipped with 8 cameras, one millimeter-wave radar, and 12 ultrasonic sensors to monitor the external environment and transmit information to the autonomous driving computer in real time.

▲ Tesla’s external sensors

In simple terms, Tesla’s cameras, millimeter-wave radar, ultrasonic sensors, and inertial measurement units record the vehicle’s current environmental data and send it to the autonomous driving computer. After running its algorithms, the computer sends speed and direction commands to the steering, accelerator, and brakes, thereby controlling the vehicle.

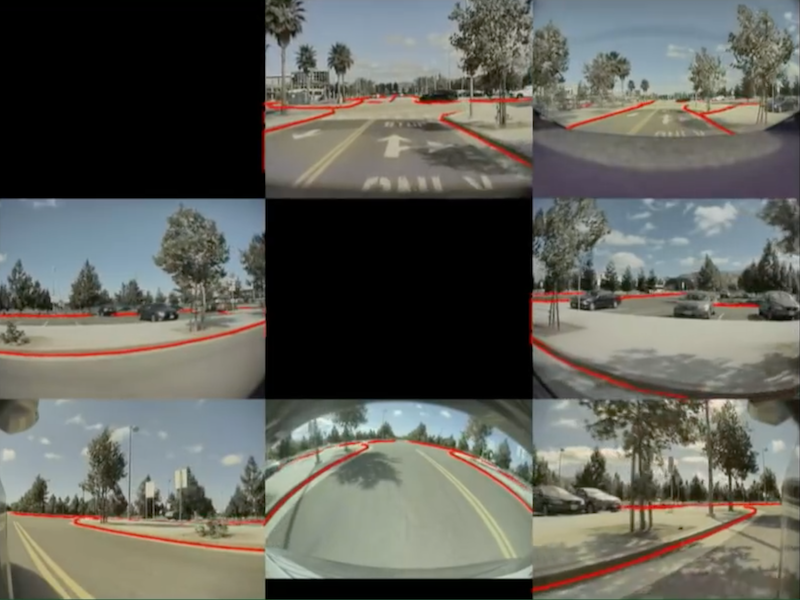

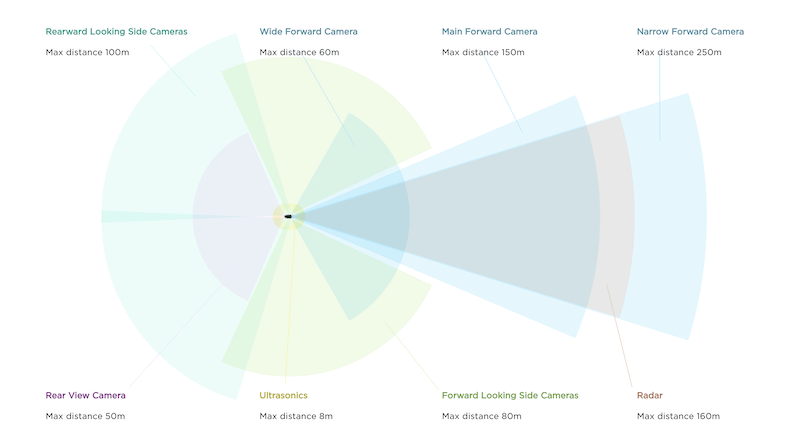

However, during regular driving, the cameras capture two-dimensional images that contain no depth information.

▲ The images captured by Tesla’s cameras can determine boundaries but do not contain depth information

This means that although two-dimensional images can distinguish between the road and the sidewalk, they do not reveal how far the vehicle is from the curb. Without this crucial information, autonomous driving calculations may be inaccurate, leading to operational errors. Therefore, capturing or constructing a three-dimensional view is necessary.

The conventional engineering answer is simply to install a three-dimensional camera on the roof. However, this increases manufacturing costs and affects the vehicle’s appearance. In addition, because the roof covers a large area, a three-dimensional camera that is not mounted high enough leaves a significant blind spot.

Tesla’s engineers thought of solving this problem through algorithms. If an algorithm can align the temporal sequences and edges of two-dimensional images to project a three-dimensional view, the problem will be resolved.

▲ The “bird’s eye view” derived from the algorithm

After calculating the three-dimensional view, Tesla can even compute the vehicle’s “bird’s eye view.” This means that although there are no cameras above the vehicle, the calculations can simulate the view from above the vehicle. This way, the distance to obstacles can be visually displayed inside the vehicle.

▲ The predicted edge of the road and lane lines by Tesla’s vision system

In fact, Tesla has an even more powerful capability: the algorithm can predict the depth of every pixel in a video stream. This means that as long as the algorithm is good enough and the video is clear enough, the depth information obtained from Tesla’s visual sensors could even exceed that of LiDAR.

▲ Tesla collects visual information (top), predicts the depth information of every pixel (middle), and projects to form a bird’s eye view (bottom)
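Once a depth value is available for a pixel, placing it on a top-down map is standard pinhole-camera geometry. The sketch below assumes the depth has already been predicted, which is the hard part and in Tesla’s case is done by a neural network. The focal length `fx`, the principal point `cx`, and the grid dimensions are hypothetical parameters chosen for illustration.

```python
def pixel_to_ground_plane(u, depth_m, fx, cx):
    """Back-project a pixel column and its depth to (lateral, forward) meters.

    Pinhole model: lateral offset X = (u - cx) * Z / fx, where Z is the depth
    along the camera's optical axis.
    """
    lateral_m = (u - cx) * depth_m / fx
    return lateral_m, depth_m


def birds_eye_grid(points, cell_m=1.0, half_width_m=2.0, max_forward_m=4.0):
    """Mark occupied cells in a top-down grid; row 0 is closest to the car."""
    cols = int(2 * half_width_m / cell_m)
    rows = int(max_forward_m / cell_m)
    grid = [[0] * cols for _ in range(rows)]
    for lateral_m, forward_m in points:
        col = int((lateral_m + half_width_m) // cell_m)
        row = int(forward_m // cell_m)
        # Points outside the mapped area are ignored.
        if 0 <= row < rows and 0 <= col < cols:
            grid[row][col] = 1
    return grid
```

With `fx=100.0` and `cx=50.0`, a pixel at column 100 with a predicted depth of 3.0 meters lands 1.5 meters to the right of the camera axis and 3.0 meters ahead.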

In actual autonomous driving applications, the parking and Smart Summon scenarios can make full use of this algorithm. In a parking lot, the distance between vehicles is very small, and even for human drivers a momentary lapse in attention can easily result in scratches. For machines, driving in parking lots is even more challenging. With predicted depth information and the assistance of the ultrasonic sensors, the vehicle can quickly recognize its surroundings, making parking much smoother.

After predicting the depth information, this information will be displayed on the vehicle’s screen and will also directly participate in controlling steering, acceleration, and braking actions. However, driving strategies for steering, acceleration, and braking do not have fixed rules and have a degree of flexibility.

Therefore, there is no single best autonomous driving strategy, only a better one.

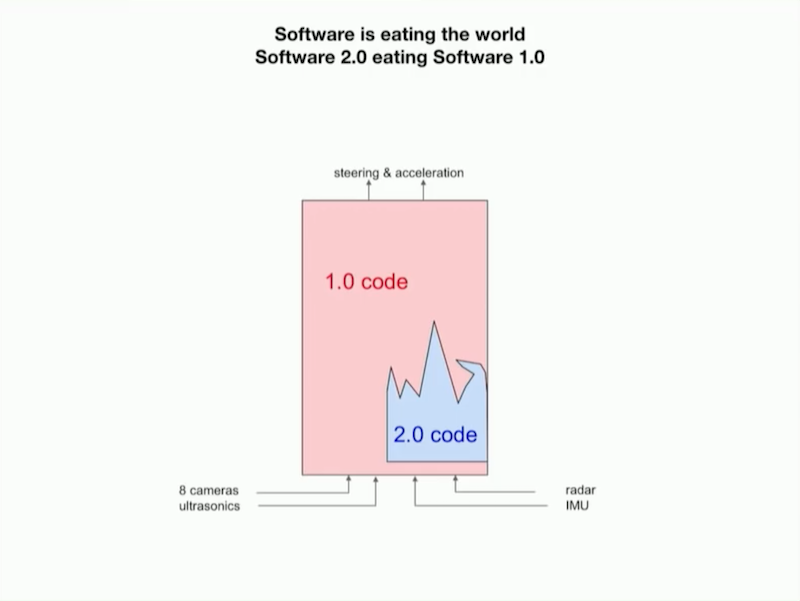

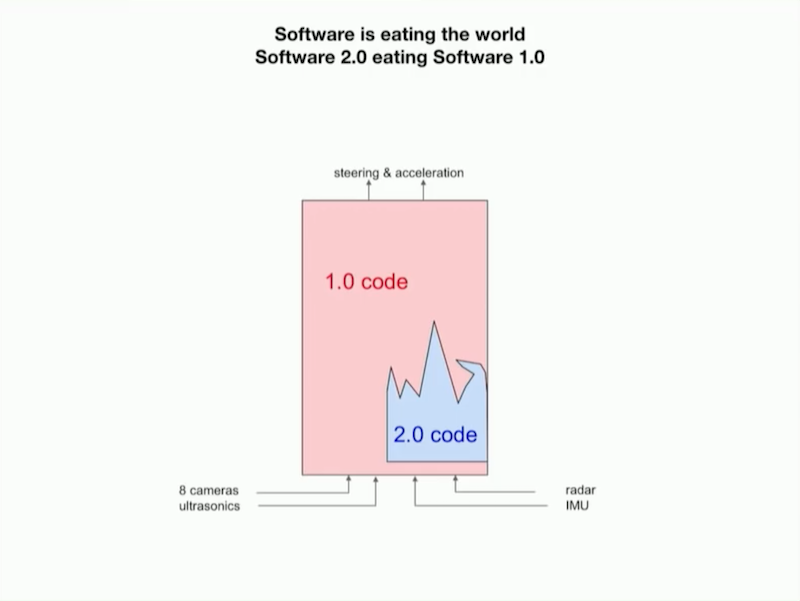

Tesla’s basic autonomous driving strategy is developed by engineers, who have written a large amount of code, which is equivalent to the 1.0 version of the driving strategy code. However, due to the complexity of actual road conditions, the coverage of the 1.0 version of the driving strategy code is relatively small, and the logic is inevitably prone to errors. As time passes, it is necessary to continuously upgrade the driving strategy.

Andrej Karpathy stated that if the strategy code is continuously upgraded within the machine learning network, it saves labor costs and significantly accelerates the improvement of autonomous driving capabilities.

During the driver’s operation, the vehicle also collects the driver’s driving habits. By learning from the driving habits of millions of Tesla owners, Tesla’s autonomous driving strategy will continuously improve.

By learning from the driving habits of millions of owners, the machine can compile the 2.0 version of the autonomous driving strategy code.

▲ The machine-compiled 2.0 version code is gradually replacing the 1.0 version code

Andrej Karpathy predicts that as the machine’s compilation ability improves and the data collected becomes richer, the 2.0 version of the code will gradually cover the 1.0 version of the code, ultimately achieving all code being compiled by machines, making the autonomous driving strategy more precise.
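The contrast between the two versions can be illustrated with a deliberately tiny example: a hand-written rule for following distance next to a rule fitted from logged driver behavior. This is a sketch of the idea only. The two-second rule, the function names, and the use of ordinary least squares are assumptions for illustration; Tesla’s system learns with neural networks, not a straight-line fit.

```python
def following_gap_v1(speed_mps):
    """Version 1.0 style: a rule written by an engineer (hypothetical two-second gap)."""
    return 2.0 * speed_mps


def fit_following_gap_v2(samples):
    """Version 2.0 style: derive the rule from data.

    `samples` is a list of (speed_mps, gap_m) pairs observed from drivers.
    Fits gap = slope * speed + intercept by ordinary least squares and
    returns the fitted rule as a function.
    """
    n = len(samples)
    mean_speed = sum(s for s, _ in samples) / n
    mean_gap = sum(g for _, g in samples) / n
    var_speed = sum((s - mean_speed) ** 2 for s, _ in samples)
    cov = sum((s - mean_speed) * (g - mean_gap) for s, g in samples)
    slope = cov / var_speed
    intercept = mean_gap - slope * mean_speed
    return lambda speed_mps: slope * speed_mps + intercept
```

Given more logged samples, the fitted rule changes without anyone editing the code, which is the sense in which the 2.0 version is “compiled” from data.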

Conclusion: The Autonomous Driving Race is Transforming into an Algorithm Race

As autonomous driving continues to develop, Tesla has formed its own school of thought, pursuing autonomous driving primarily through visual recognition. The competition has gradually shifted from stacking cameras, millimeter-wave radar, ultrasonic sensors, and even LiDAR to a contest between algorithms.

Tesla utilizes millions of vehicles driving on the roads daily for autonomous driving calculations, and its data sources and accuracy likely far exceed those of other autonomous driving testing companies. In the future, the competition of algorithms will gradually expand, and the competition in the autonomous driving market will become even more intense.