This is a short record of, and a fix for, some AI configuration problems in SiYuan Note.

This article mainly covers how to use a local Ollama model for the AI features in SiYuan Note. The main plugin involved is SiYuan Note’s ‘Publishing Tool’ (surprising, right?).

1. Why

- First, why do this?

The first reason is that Obsidian (ob), SiYuan’s neighbor, has long supported large models, and features such as document summarization are really convenient. SiYuan, by contrast, appears on the surface to support only ChatGPT.

Second, AI is hard to get for free. Many plugins are excellent, but they hard-code the AI API they call; Tomato Toolbox, for example, supports AI but only calls APIs from Baidu, DeepSeek, and the like. For anyone who wants to work offline or avoid paying, that is hard to accept.

Third, many plugins read the AI settings provided by SiYuan Note directly, but those settings are confusing, and it is unclear how to fill them in.

- Next, where are the limitations?

Although this article discusses how SiYuan Note calls AI, SiYuan has fewer developers than Obsidian, so the available plugins follow their developers’ preferences and will not be tailored to your own preferences and habits.

In short, the setup can be tried and used today, but whether it is useful and actually improves productivity varies from person to person.

2. How to Do It

- Open SiYuan Note, search for the Publishing Tool in the marketplace, and install it.

- Install Ollama locally; refer to previous articles for the installation method. Then download a model in Ollama; this article uses the ‘Qwen 2.5:latest’ model as an example.
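Before touching the SiYuan settings, it can help to confirm that Ollama is running and that the model has actually been downloaded. The following is a minimal sketch, not part of the original setup: it assumes Ollama is listening on its default address `http://127.0.0.1:11434` and uses Ollama's `/api/tags` endpoint, which lists locally available models. The function names are made up for illustration.

```python
import json
import urllib.request

# Assumption: Ollama's default address and port; adjust if yours differs.
OLLAMA_URL = "http://127.0.0.1:11434"


def installed_models(tags_response: dict) -> list[str]:
    """Extract model names from the JSON body returned by GET /api/tags."""
    return [m["name"] for m in tags_response.get("models", [])]


def has_model(tags_response: dict, wanted: str) -> bool:
    """Return True if `wanted` matches an installed model, with or without a tag."""
    for name in installed_models(tags_response):
        # "qwen2.5" should match "qwen2.5:latest"; a full name must match exactly.
        if name == wanted or name.split(":", 1)[0] == wanted:
            return True
    return False


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> dict:
    """Ask the local Ollama server which models it has pulled (needs a running server)."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return json.load(resp)
```

With the server running, `has_model(fetch_tags(), "qwen2.5")` should be true once the model has finished downloading; a connection error instead suggests that Ollama is not running or is listening on a different port.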

- Open the settings in SiYuan Note, click the AI section, and set the fields as follows:

API Provider: Choose OpenAI. Here OpenAI refers to the OpenAI protocol, not the company OpenAI; this misled me for a long time.

Timeout: No need to change; keep it at 5, meaning the request is reported as failed if there is no response within 5 seconds.

Max Token Count: This depends on how much context the model can handle; unless you have special requirements, 4096 is generally sufficient.

Temperature: This parameter controls the model’s creativity and can generally be set between 0.2 and 0.8. The higher the temperature, the more creative the output, but the more likely it is to be nonsensical. For specifics, refer to the previous articles on custom models in Ollama.

Max Context Count: This is how many rounds of dialogue are supported, that is, how many earlier exchanges are remembered; unless you have special needs, keep it at 7.

Model: Enter the name of the local Ollama model. For example, I used the 7B ‘Qwen 2.5:latest’ model, so I entered qwen2.5.

API Key: This was the part that confused me the most, but it is actually very easy to set: just enter ollama.

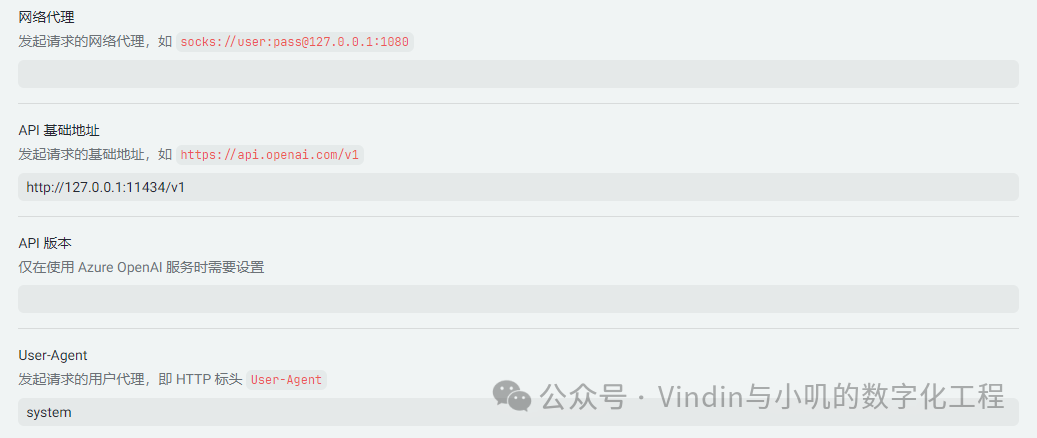

Network Proxy: SOCKS proxy, leave blank.

API Base Address: Enter the Ollama API address with ‘v1’ appended as the final path segment. Ollama listens on port 11434 by default, so the address is: http://127.0.0.1:11434/v1

API Version: Leave blank when using the OpenAI protocol.

User-Agent: Despite what the name might suggest, this is the HTTP User-Agent header sent with each request, not a system or user chat role; if unsure, you can simply enter user.
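To check these values outside SiYuan, the same settings can be sent to Ollama's OpenAI-compatible endpoint by hand. This is only a sketch under stated assumptions: the port 11434 is Ollama's default, the temperature of 0.5 is an arbitrary value inside the suggested range, and `SETTINGS`, `build_chat_request`, and `ask` are illustrative names, not anything SiYuan itself uses.

```python
import json
import urllib.request

# Values mirror the settings described above. The port is an assumption
# (11434 is Ollama's default) and the temperature is an arbitrary example.
SETTINGS = {
    "base_url": "http://127.0.0.1:11434/v1",
    "api_key": "ollama",   # placeholder value; Ollama does not validate it
    "model": "qwen2.5",
    "max_tokens": 4096,
    "temperature": 0.5,
    "timeout": 5,          # seconds
}


def build_chat_request(prompt: str, settings: dict = SETTINGS) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the local server."""
    body = {
        "model": settings["model"],
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": settings["max_tokens"],
        "temperature": settings["temperature"],
        "stream": False,  # ask for the whole answer in one response
    }
    return urllib.request.Request(
        url=settings["base_url"].rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {settings['api_key']}",
        },
        method="POST",
    )


def ask(prompt: str, settings: dict = SETTINGS) -> str:
    """Send the request and return the reply text (needs a running Ollama server)."""
    req = build_chat_request(prompt, settings)
    with urllib.request.urlopen(req, timeout=settings["timeout"]) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

If `ask("Say hello")` returns text, the base address, model name, and key are consistent, and any remaining problem is likely on the SiYuan side. A timeout here may simply mean the model needs longer than 5 seconds to load and answer.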

- After setting up, test the effect.

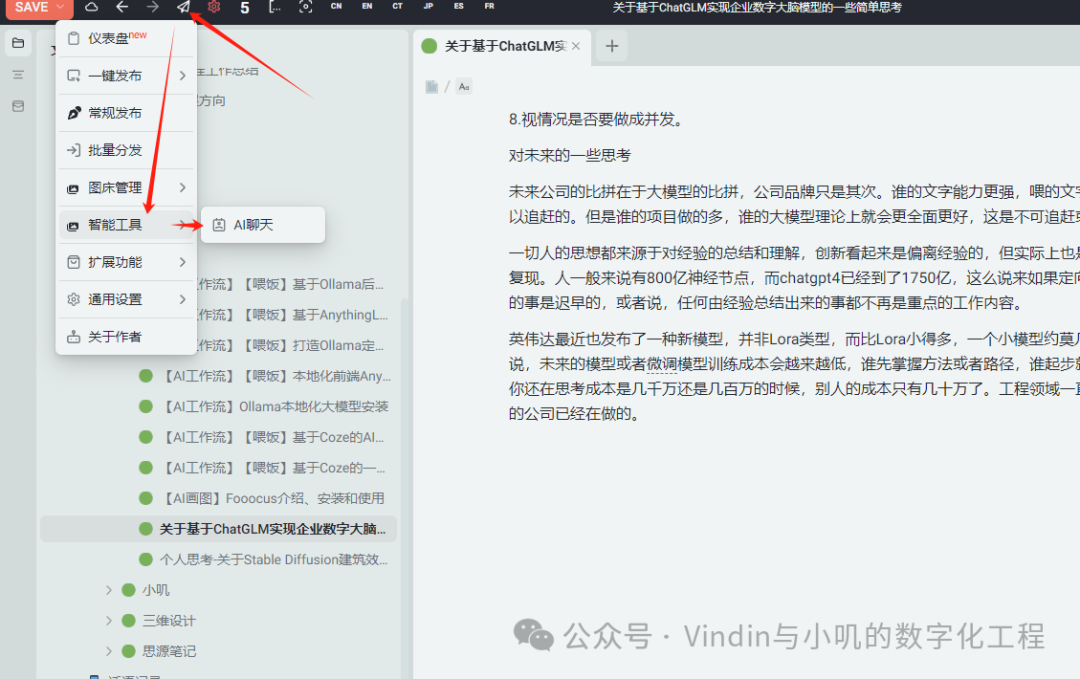

Open a note or an article you’ve copied, click the Publishing Tool, select intelligent tools, and choose AI chat.

- In the new window, first confirm that the green text comes from the article itself, then type your question and click send; the result appears below.

Note that the text here is not streamed, so a complex question may take a long time and then be output all at once.
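For contrast, an OpenAI-compatible endpoint can also return the answer as a stream of small chunks when the request sets `"stream": true`, which is what makes text appear gradually in other clients. The sketch below only illustrates how such a stream is usually read; the sample lines are hand-written rather than captured from Ollama, and `iter_stream_text` is a made-up helper name.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style server-sent event lines."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and anything that is not data
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream marker
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


# Hand-written sample chunks, not captured from a real server.
sample = [
    'data: {"choices": [{"delta": {"role": "assistant", "content": ""}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hello"}}]}',
    'data: {"choices": [{"delta": {"content": ", world"}}]}',
    "data: [DONE]",
]
print("".join(iter_stream_text(sample)))  # prints "Hello, world"
```

A non-streamed request, as used here, waits for the full answer and returns it in a single JSON body, which matches the "long pause, then everything at once" behavior.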

3. Conclusion

This AI tool has many areas that could be improved, but to be fair, the response quality is quite good, and the output is in Markdown (md) format, so it is easy to copy.

Correspondingly, some AI functions in SiYuan Note itself have also been unlocked; for example, clicking the icon in front of a text block offers ‘continuation’ and so on, but the results are still not quite satisfactory. A common saying applies here. Low emotional intelligence: "This is not good enough." High emotional intelligence: "There is a lot of room for improvement."