Microsoft recently released its latest language model Phi-4, which has been open-sourced on Hugging Face, attracting widespread attention.

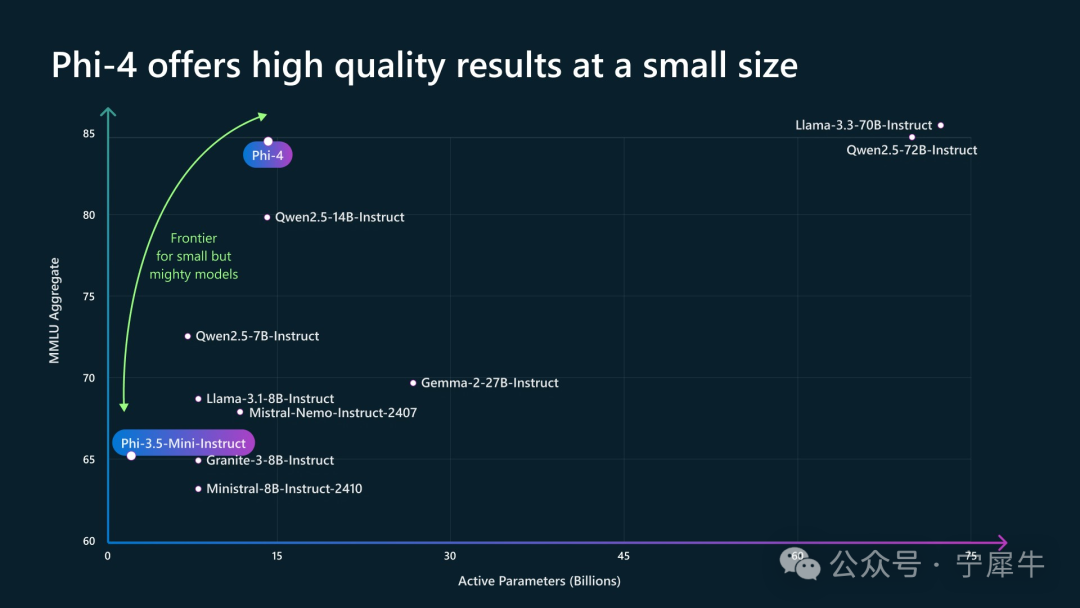

Although Phi-4 is smaller in scale, it is powerful and outperforms larger competitors in reasoning tasks.

Overview of the Phi-4 Model

Phi-4 is a small language model (SLM) developed by Microsoft Research with 14 billion parameters, focusing on complex reasoning, particularly excelling in mathematical reasoning tasks while also capable of traditional natural language processing tasks.

Phi-4 is a dense, decoder-only Transformer. Because it is smaller than most large language models (LLMs), it requires fewer computational resources and less energy, which puts it within reach of small and medium-sized enterprises and individual researchers.
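To make the resource claim concrete, here is a rough estimate of how much memory the weights alone would occupy at different numeric precisions. It is a sketch under stated assumptions: the parameter count is taken as exactly 14 billion, the listed precisions are illustrative choices, and activations, KV cache, and optimizer state are ignored.

```python
# Rough weight-memory estimate for a 14-billion-parameter model.
# Assumption: the parameter count is exactly 14e9 and only weights are counted
# (no activations, KV cache, or optimizer state).

PARAMS = 14_000_000_000

# Bytes per parameter for a few common storage formats (illustrative choices).
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "bf16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_gigabytes(params: int, bytes_per_param: float) -> float:
    """Return weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


for fmt, size in BYTES_PER_PARAM.items():
    print(f"{fmt}: ~{weight_gigabytes(PARAMS, size):.0f} GB")
```

At 16-bit precision this works out to about 28 GB of weights, and 4-bit quantization would bring it down to about 7 GB.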

Microsoft released Phi-4 in December 2024, and it is available on Azure AI Foundry and Hugging Face. The permissive MIT license lets developers, researchers, and enterprises use and modify the model freely, which helps broaden access to AI innovation.
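For readers who want to try the Hugging Face release, the following is a minimal sketch using the `transformers` text-generation pipeline. The model ID `microsoft/phi-4`, the default system prompt, and the helper names `build_messages` and `ask_phi4` are assumptions made for illustration. Running `ask_phi4` downloads the full checkpoint and needs `transformers`, `torch`, and `accelerate` installed, plus enough memory to hold the model.

```python
# Sketch of chatting with Phi-4 via the Hugging Face `transformers` pipeline.
# Assumptions: the checkpoint ID below, and that `transformers`, `torch`, and
# `accelerate` are installed on a machine with enough memory for the model.

MODEL_ID = "microsoft/phi-4"  # assumed Hugging Face model ID


def build_messages(question: str, system_prompt: str = "You are a careful math tutor.") -> list[dict]:
    """Build a chat-style message list (system turn followed by user turn)."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question},
    ]


def ask_phi4(question: str, max_new_tokens: int = 128) -> str:
    """Load the model on each call and return the assistant's reply text."""
    import transformers  # imported lazily so build_messages works without it

    pipe = transformers.pipeline(
        "text-generation",
        model=MODEL_ID,
        model_kwargs={"torch_dtype": "auto"},
        device_map="auto",
    )
    outputs = pipe(build_messages(question), max_new_tokens=max_new_tokens)
    # With chat-style input, generated_text holds the whole conversation;
    # the last entry is the newly generated assistant message.
    return outputs[0]["generated_text"][-1]["content"]
```

A call such as `ask_phi4("What is the sum of the first 50 odd numbers?")` would return the model's answer as a string. In a real application the pipeline would be created once and reused rather than rebuilt on every call.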

Phi-4 has a context length of 16K tokens, which is the combined budget for the prompt and the generated output in a single request.
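As a small illustration of working within that window, the helper below computes how many tokens remain for generation once the prompt has been counted. It assumes that "16K" means 16 × 1024 = 16,384 tokens and that the caller supplies the prompt's token count (for example, from the model's tokenizer). The function name is hypothetical.

```python
# Minimal context-budget check for a 16K-token window.
# Assumption: "16K" means 16 * 1024 = 16,384 tokens, and token counts are
# supplied by the caller (e.g. from the model's tokenizer).

CONTEXT_WINDOW = 16 * 1024  # assumed exact size of the 16K window


def max_new_tokens_allowed(prompt_tokens: int, window: int = CONTEXT_WINDOW) -> int:
    """Return how many tokens can still be generated after the prompt."""
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    return max(window - prompt_tokens, 0)
```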

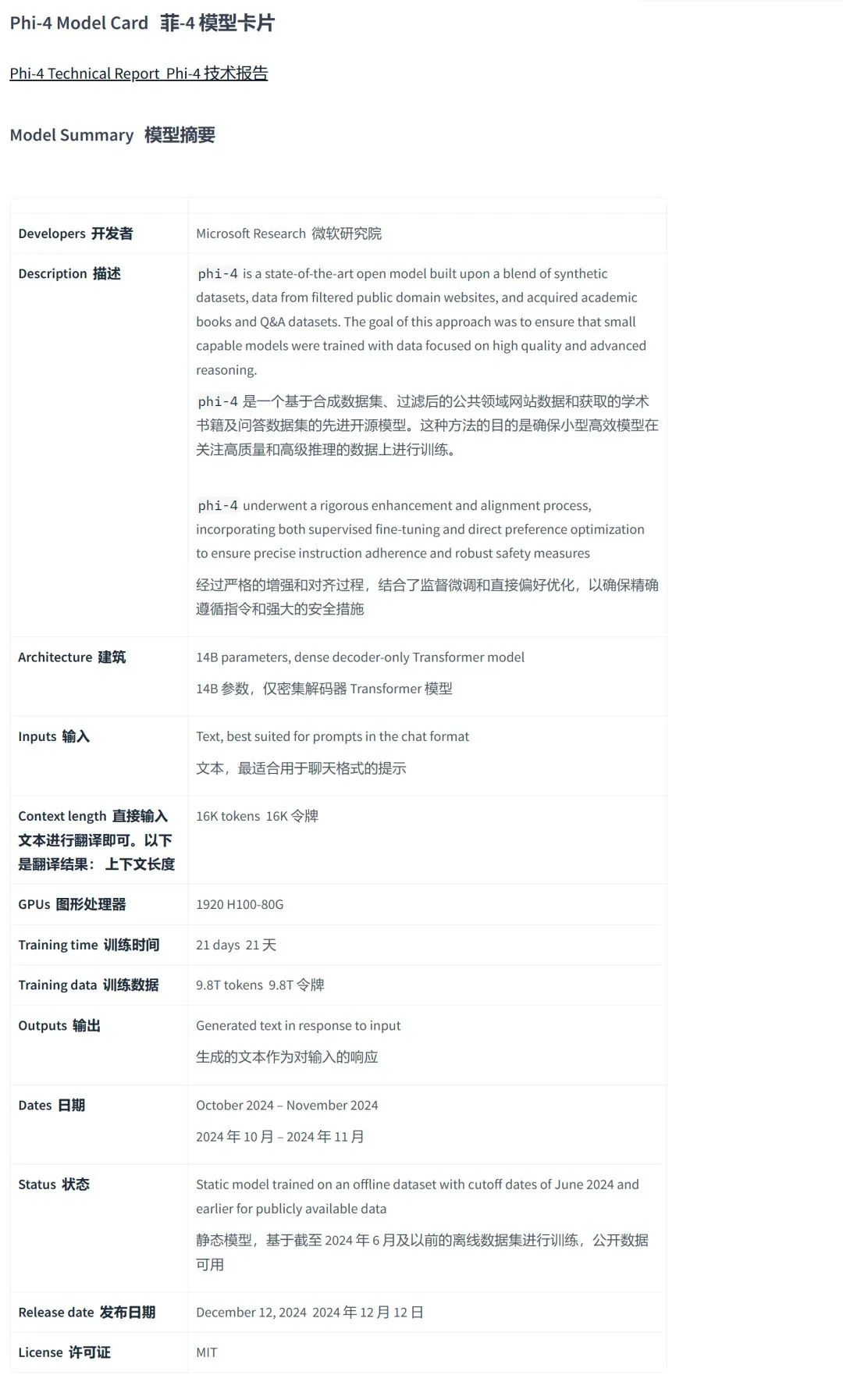

Training took 21 days on 1,920 H100-80G GPUs and consumed 9.8T tokens. The training data falls into three main categories: high-quality synthetic datasets, filtered public-domain website data, and academic books and Q&A datasets.
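The training figures quoted above (21 days, 1,920 GPUs, 9.8T tokens) can be turned into a few back-of-the-envelope numbers. This sketch assumes the GPUs ran continuously for the full period, and it uses the common rule of thumb of roughly 6 × parameters × tokens for dense-model training compute. The resulting FLOP count is an estimate, not a reported figure.

```python
# Back-of-the-envelope training arithmetic from the quoted figures.
# Assumptions: GPUs ran continuously for the full 21 days, and total compute
# follows the common ~6 * parameters * tokens rule of thumb for dense models.

GPUS = 1920
DAYS = 21
TOKENS = 9.8e12   # 9.8T training tokens
PARAMS = 14e9     # 14 billion parameters

gpu_hours = GPUS * DAYS * 24
tokens_per_gpu_second = TOKENS / (gpu_hours * 3600)
approx_train_flops = 6 * PARAMS * TOKENS  # rule-of-thumb estimate, not a reported figure

print(f"GPU-hours: {gpu_hours:,}")
print(f"Tokens per GPU per second: {tokens_per_gpu_second:,.0f}")
print(f"Approximate training compute: {approx_train_flops:.2e} FLOPs")
```

Under these assumptions the run amounts to 967,680 GPU-hours.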

Phi-4 challenges the traditional notion that bigger models are better. Its compact design reduces computational and energy costs, making advanced AI capabilities more accessible to small organizations and researchers, promoting a more inclusive AI ecosystem.

Additionally, Phi-4 is a static model trained on an offline dataset with a cutoff date of June 2024. This means the model’s knowledge base is fixed and will not be updated over time.

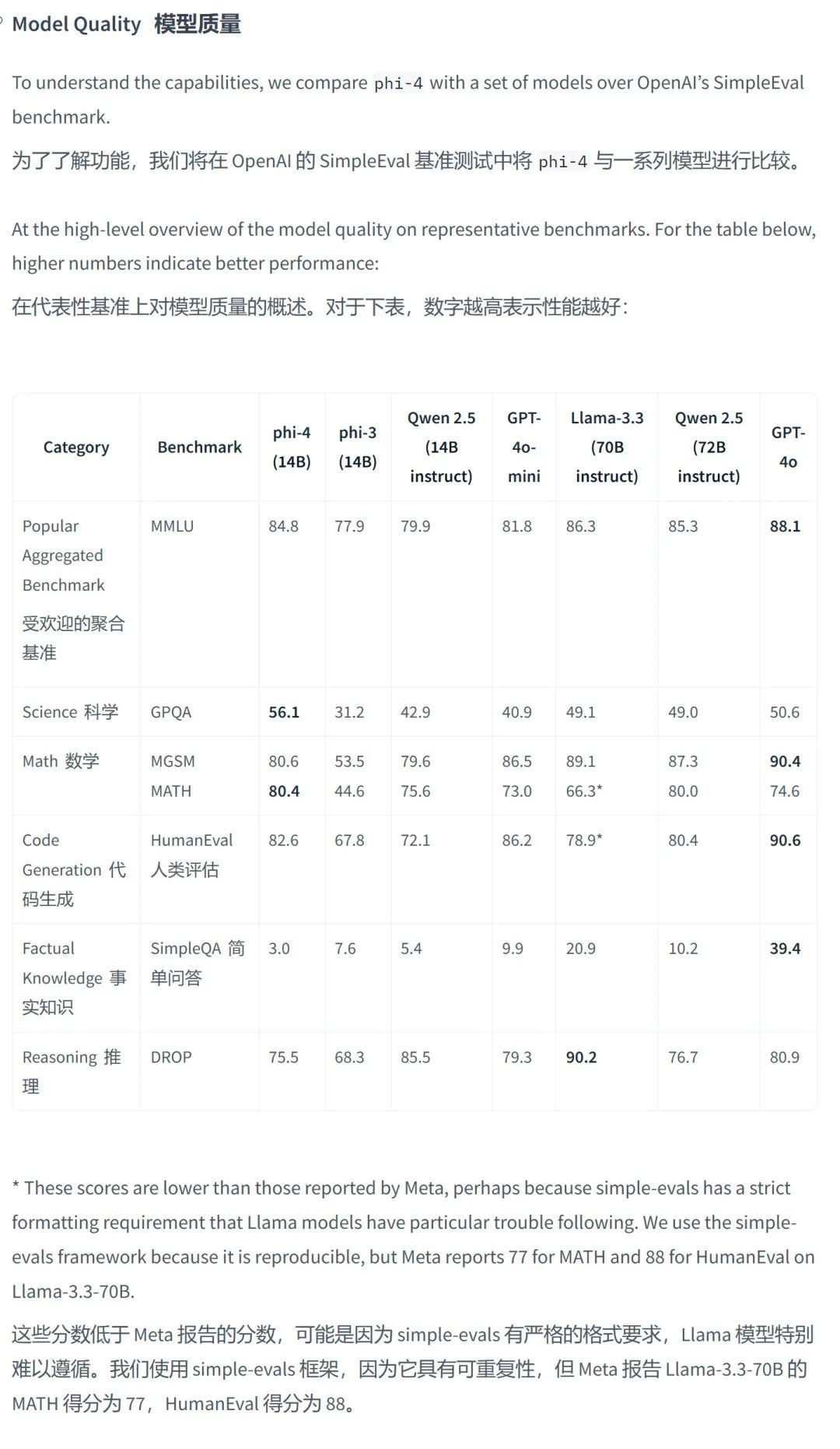

Performance of the Phi-4 Model

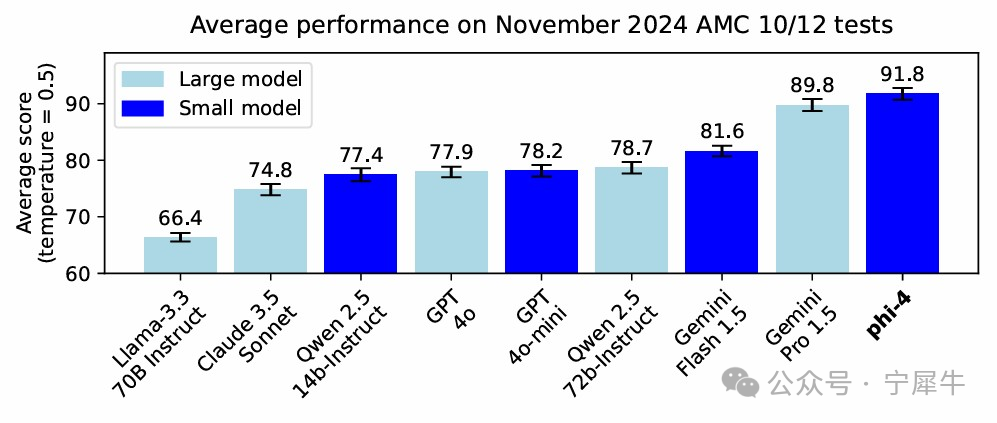

Phi-4 has demonstrated outstanding performance across multiple benchmark tests, especially in mathematical reasoning.

For instance, on math competition problems, Phi-4 outperformed many larger models, including Gemini Pro 1.5.

It excelled in solving problems from the American Mathematics Competitions (AMC), indicating its strong capabilities in mathematical reasoning.

Phi-4 outperformed its peers in 9 out of 12 different benchmark tests, and in 11 out of 14 benchmark tests, it surpassed its predecessor Phi-3. More importantly, Phi-4 also performed well on entirely new test sets, indicating that its top performance on MATH benchmark tests is not due to overfitting or data contamination.

The excellent performance of Phi-4 can be attributed to several factors:

- High-Quality Training Data: Phi-4 was trained on high-quality synthetic datasets, filtered public-domain website data, and academic books and Q&A datasets, which give the model broad knowledge and strong reasoning capabilities.

- Innovative Training Techniques: Training combined synthetic datasets with curated organic data, an approach that addresses limits on data availability and may lay the groundwork for future model development. Techniques such as multi-agent prompting, self-correcting workflows, and instruction reversal were used to build more effective training datasets and strengthen the model’s reasoning and problem-solving capabilities.

- Post-Training Optimization: Phi-4 underwent rigorous post-training refinement and alignment, including supervised fine-tuning (SFT) and direct preference optimization (DPO), so that the model follows instructions accurately and has robust safety measures. Synthetic data also played a critical role at this stage, with rejection sampling and a new DPO technique used to improve the model’s outputs.

- Safety and Robustness: Phi-4 can be deployed with Azure AI’s content safety tools, including mechanisms such as prompt shields and protected material detection. These reduce the risks posed by adversarial prompts and make the model safer and more robust in real-world environments.
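To give a sense of what direct preference optimization computes, here is a minimal sketch of the standard DPO loss for a single pair of responses. This is the textbook formulation, not the new DPO variant mentioned above, whose details this article does not describe. The inputs are assumed to be summed token log-probabilities of each full response, and `beta = 0.1` is an illustrative default rather than a value from Phi-4's training.

```python
import math

# Standard DPO loss for one (chosen, rejected) response pair.
# Assumptions: inputs are summed token log-probabilities of each full response
# under the policy being trained and under a frozen reference model;
# beta = 0.1 is an illustrative default, not a value from Phi-4's training.


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """Return -log(sigmoid(beta * (chosen log-ratio - rejected log-ratio)))."""
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(x)) == log(1 + exp(-x)); branch on the sign for numerical stability.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))
```

When the policy matches the reference model the loss equals log 2. It falls as the policy raises the preferred response's probability relative to the rejected one, which is the behavior preference tuning is meant to reward.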

Applications of the Phi-4 Model

With its strong reasoning capabilities and smaller scale, Phi-4 is suitable for various application scenarios:

- Chatbots: Phi-4 can be used to build responsive and context-aware conversational agents.

- Education: Phi-4 can be utilized to create AI tutors capable of solving math problems or explaining concepts.

- Code Generation: Phi-4 can handle technical prompts, simplifying programming tasks.

- Research Tools: Phi-4 can enhance data analysis capabilities, providing advanced reasoning and natural language processing functions.

- Text Generation: Phi-4 can generate various types of text content, such as poetry, code, scripts, musical works, emails, and letters, offering new possibilities for creative writing and content creation.

Phi-4 also shows great potential in practical applications:

- Healthcare: Phi-4 can be used to build healthcare systems that streamline administrative tasks, analyze patient data, and even assist in diagnosis through natural language processing. It can facilitate advancements in the healthcare industry, providing more streamlined and precise computational tools that lead to life-changing benefits.

- E-commerce: Phi-4 can create personalized shopping experiences, such as AI-driven product recommendations and virtual customer support agents.

Limitations of the Phi-4 Model

Despite significant advancements, Phi-4 also has some limitations:

- Factual Hallucination: Although targeted training mitigates the problem, studies show that Phi-4 may still hallucinate facts, especially when handling less common knowledge.

- Instruction Following: Reports indicate that Phi-4 may struggle with complex instructions, such as generating strict table formats, adhering to predefined bullet structures, or precisely matching style constraints. This may be because training focused on synthetic datasets for Q&A and reasoning tasks rather than on instruction-following scenarios.

- Reasoning Errors: Even in reasoning tasks, Phi-4 may make errors.

- Code Generation: The code in Phi-4’s training data is primarily Python and uses common packages such as typing, math, random, collections, datetime, and itertools. If a generated Python script uses other packages, or if the model generates code in another language, users are strongly advised to verify all API usage manually.
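One way to act on the code-generation caveat is to flag automatically which imports in a generated script fall outside the packages listed above, so that a reviewer knows where to look first. The following is a minimal sketch using Python's standard `ast` module. The function name and the sample script are hypothetical, and a flagged import only means "review this manually", not "this is wrong".

```python
import ast

# Packages the article lists as well covered by Phi-4's training data.
WELL_COVERED = {"typing", "math", "random", "collections", "datetime", "itertools"}


def imports_needing_review(source: str, trusted: set[str] = WELL_COVERED) -> set[str]:
    """Return top-level module names imported by `source` that are not in `trusted`."""
    found: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Relative imports (level > 0) are skipped: they refer to local code.
            found.add(node.module.split(".")[0])
    return found - trusted


# Hypothetical model output used only to exercise the helper.
generated = (
    "import math\n"
    "import numpy as np\n"
    "from collections import Counter\n"
    "from requests.adapters import HTTPAdapter\n"
)
print(sorted(imports_needing_review(generated)))  # → ['numpy', 'requests']
```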

Future Development Directions for the Phi-4 Model

Microsoft plans to expand the capabilities of Phi-4 through updates, with future development directions including:

- Real-Time Collaboration: Enhancing AI support for group projects and real-time collaboration.

- Multimodal Capabilities: Extending Phi-4’s capabilities to other modalities such as images and videos.

- Continuous Learning: Enabling Phi-4 to continuously learn and adapt to new information and tasks.

Conclusion

Phi-4 is a significant breakthrough in the realm of small language models, demonstrating that the size and performance of models are not always positively correlated. With its strong reasoning capabilities, smaller scale, and open-source nature, Phi-4 opens new possibilities for the popularization and application of AI technology. Phi-4 focuses on reasoning, especially mathematical reasoning, distinguishing it from other large language models that typically emphasize general language understanding and generation capabilities.

Phi-4’s compact size and efficiency also make it a strong choice for resource-constrained environments and latency-sensitive applications.

The open-source availability of Phi-4 and its permissive MIT license carry broader implications. This not only fosters innovation in the AI field but also transforms the way AI technologies are developed and shared, making them more inclusive and collaborative. By lowering the barriers to entry, Phi-4 enables more researchers, developers, and enterprises to participate in AI innovation and drive advancements in AI technology.

Despite some limitations, Microsoft is actively exploring future development directions for Phi-4 and plans to expand its capabilities through continuous updates. As generative AI technology continues to evolve, Phi-4 is expected to play a significant role in more domains and may influence future trends in AI model development, particularly regarding model size and efficiency.
