Aitrainee | Official Account: AI Trainee

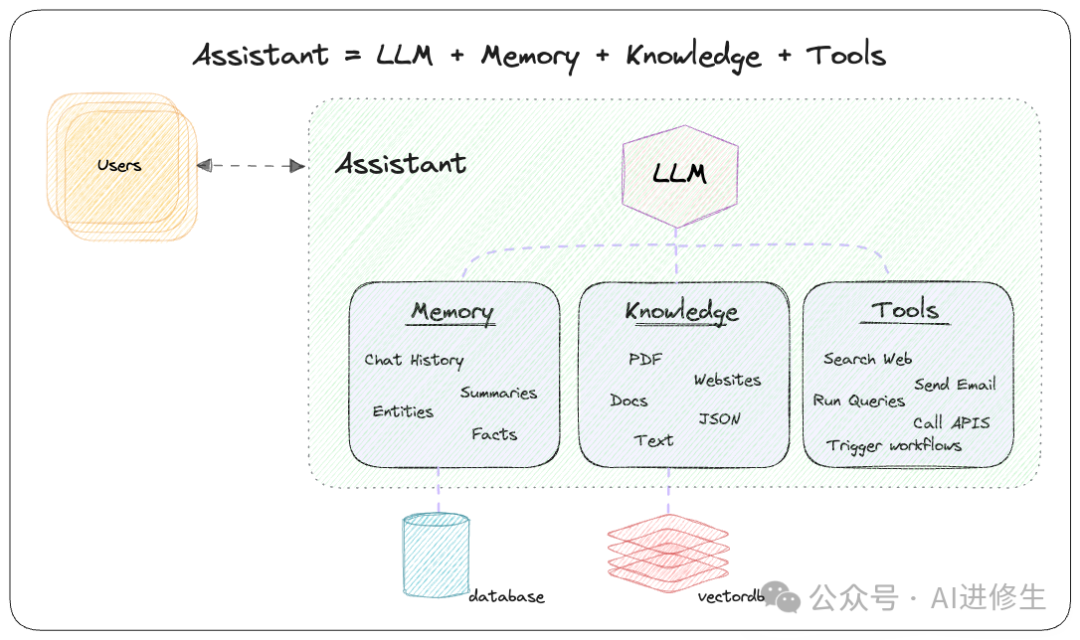

🌟Phidata adds memory, knowledge, and tools to LLMs.

⭐️ Phidata: https://git.new/phidata

Phidata is a framework for building autonomous assistants (also known as agents) that have long-term memory, contextual knowledge, and can perform actions through function calls.


Why Choose Phidata?


Problem: Large Language Models (LLMs) have limited context and cannot perform actions.

Solution: Add memory, knowledge, and tools.

-

• Memory: Store chat history in a database to enable long-term conversations for LLMs.

-

• Knowledge: Store information in a vector database to provide LLMs with business context.

-

• Tools: Enable LLMs to perform actions, such as pulling data from APIs, sending emails, or querying databases.

-

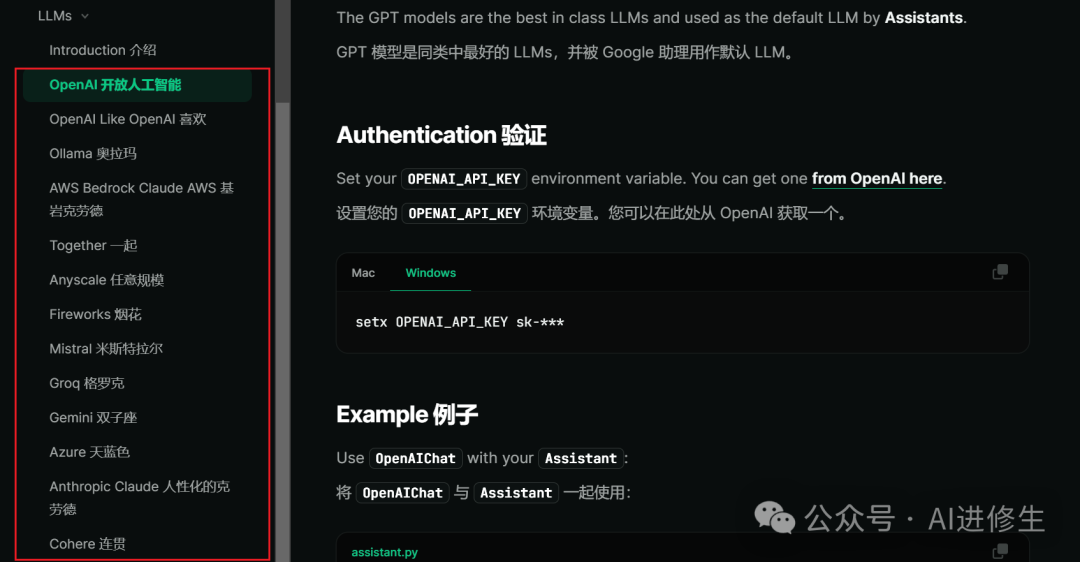

• Supported Large Models: Supports numerous mainstream LLM providers

-

▲ Supports numerous mainstream LLM providers

How Do I Start Using This Project?


• Step 1: Create an Assistant

• Step 2: Add tools (functions), knowledge (vectordb), and storage (database)

• Step 3: Serve your AI application using Streamlit, FastAPI, or Django

How to Create an Assistant

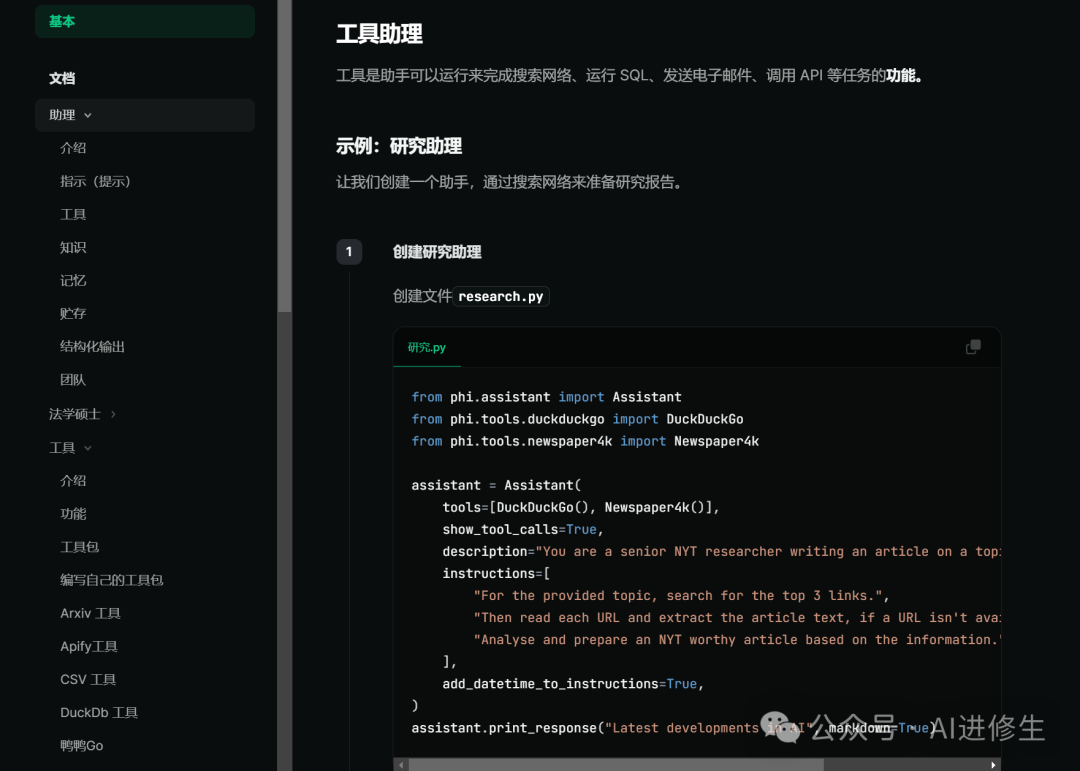

Tools are functions that the assistant can run to perform tasks such as searching the web, running SQL, sending emails, calling APIs, etc.
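To make the idea concrete, here is a minimal sketch of how a function-calling loop might dispatch a tool call. It does not use Phidata's internals: the `TOOLS` registry, the `tool` decorator, `dispatch_tool_call`, and the two toy tools are hypothetical names for illustration, and the JSON shape of the request is an assumption modeled on common function-calling formats.

```python
import json
from typing import Callable, Dict

# Hypothetical registry mapping tool names to plain Python functions.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the dispatcher can look it up by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def add_numbers(a: float, b: float) -> str:
    """Toy tool: add two numbers and return the result as text."""
    return str(a + b)


@tool
def shout(text: str) -> str:
    """Toy tool: upper-case a string."""
    return text.upper()


def dispatch_tool_call(call_json: str) -> str:
    """Run the tool named in a JSON request such as
    {"name": "shout", "arguments": {"text": "hi"}}."""
    call = json.loads(call_json)
    name = call["name"]
    if name not in TOOLS:
        return f"Unknown tool: {name}"
    return TOOLS[name](**call.get("arguments", {}))
```

In a real assistant the JSON request would come from the LLM, and the string returned by the tool would be sent back to the model so it can compose its final answer.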

How to Create a Knowledge Base

The knowledge base is a database of information that the assistant can search to improve its responses. This information is stored in a vector database and provides contextual knowledge to LLMs, enabling them to respond in a context-aware manner.

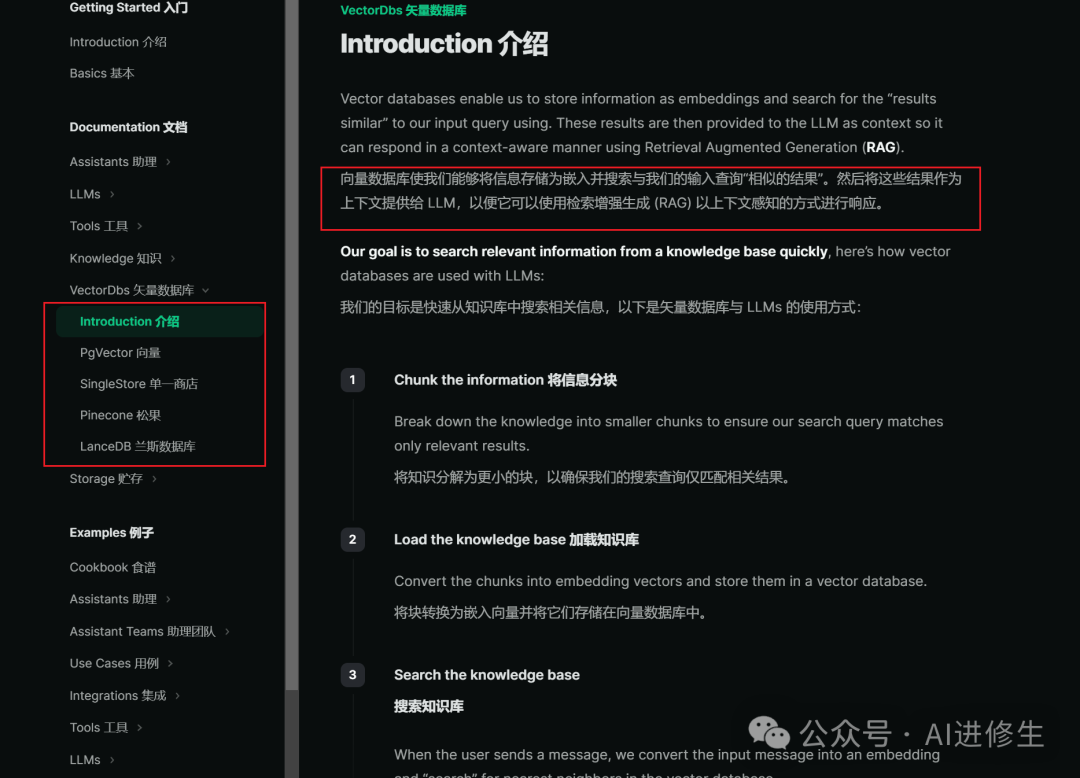

How to Create a Vector Database

A vector database allows us to store information as embeddings and search for “similar results” to our input queries. These results are then provided as context to the LLM so it can respond in a context-aware manner using Retrieval-Augmented Generation (RAG).
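The retrieval step can be sketched in a few lines of plain Python. This is an illustration only, not how PgVector or Phidata implement it: the `embed` function is a toy bag-of-words stand-in for a learned embedding model, and `ToyVectorStore` and `build_prompt` are hypothetical names.

```python
import math
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (real systems use a learned model)."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ToyVectorStore:
    """In-memory store that keeps each text next to its embedding."""

    def __init__(self) -> None:
        self._rows: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._rows.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> List[str]:
        """Return the k stored texts most similar to the query."""
        query_vec = embed(query)
        ranked = sorted(
            self._rows,
            key=lambda row: cosine_similarity(query_vec, row[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:k]]


def build_prompt(question: str, store: ToyVectorStore) -> str:
    """RAG step: put the retrieved text in front of the question."""
    context = "\n".join(store.search(question, k=1))
    return f"Context:\n{context}\n\nQuestion: {question}"
```

The string returned by `build_prompt` is what would be sent to the LLM, which is how the retrieved text ends up shaping the answer.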

Official Example Using the Above Three Steps

The assistant demonstrates how to use LLMs for function calls. This assistant can access a function get_top_hackernews_stories that it can call to get the top stories from Hacker News.
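The article names the function but does not show it, so the following is only a sketch of what `get_top_hackernews_stories` could look like, written against the public Hacker News Firebase API using the standard library. The `fetch` parameter and the `fetch_json` helper are assumptions added here so that the function can be exercised without network access; they are not part of the official example.

```python
import json
from typing import Any, Callable
from urllib.request import urlopen

HN_API = "https://hacker-news.firebaseio.com/v0"


def fetch_json(url: str) -> Any:
    """Download a URL and decode its JSON body."""
    with urlopen(url, timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))


def get_top_hackernews_stories(
    num_stories: int = 10,
    fetch: Callable[[str], Any] = fetch_json,  # hypothetical hook for testing
) -> str:
    """Return the top Hacker News stories as a JSON string."""
    story_ids = fetch(f"{HN_API}/topstories.json")
    stories = []
    for story_id in story_ids[:num_stories]:
        story = fetch(f"{HN_API}/item/{story_id}.json")
        story.pop("text", None)  # drop long body text to keep the payload small
        stories.append(story)
    return json.dumps(stories)
```

Judging by the `tools=[...]` parameter used in this article's quick start, a function like this would presumably be handed to the `Assistant` in the same way; check the Phidata documentation for the exact form.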

The sections below reproduce the official documentation: an introduction, related resources, and deployment tutorials that complement this article.

Installation

    pip install -U phidata

Quick Start: Web-Searching Assistant

Create a file assistant.py

    from phi.assistant import Assistant
    from phi.tools.duckduckgo import DuckDuckGo

    assistant = Assistant(tools=[DuckDuckGo()], show_tool_calls=True)
    assistant.print_response("What is happening in France?", markdown=True)

Install the libraries, export your OPENAI_API_KEY, and run the assistant

    pip install openai duckduckgo-search
    export OPENAI_API_KEY=sk-xxxx
    python assistant.py

Documentation and Support

• Read the documentation: docs.phidata.com

• Chat with us on Discord

Examples

-

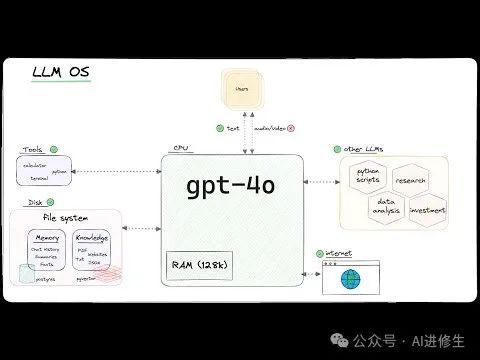

• LLM OS: Using LLMs as the CPU of an emerging operating system.

-

• Autonomous RAG: Providing tools for LLMs to search their knowledge, web, or chat history.

-

• Local RAG: Fully local RAG using Ollama and PgVector.

-

• Investment Researcher: Generating stock investment reports using Llama3 and Groq.

-

• News Articles: Writing news articles using Llama3 and Groq.

-

• Video Summaries: Summarizing YouTube videos using Llama3 and Groq.

-

• Research Assistant: Writing research reports using Llama3 and Groq.

Assistant that Can Write and Run Python Code

PythonAssistant can complete tasks by writing and running Python code.

• Create a file python_assistant.py

    from phi.assistant.python import PythonAssistant
    from phi.file.local.csv import CsvFile

    python_assistant = PythonAssistant(
        files=[
            CsvFile(
                path="https://phidata-public.s3.amazonaws.com/demo_data/IMDB-Movie-Data.csv",
                description="Contains information about IMDB movies.",
            )
        ],
        pip_install=True,
        show_tool_calls=True,
    )

    python_assistant.print_response("What is the average rating of movies?", markdown=True)

• Install pandas and run python_assistant.py

    pip install pandas
    python python_assistant.py

Assistant for Data Analysis Using SQL

DuckDbAssistant can perform data analysis using SQL.
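For orientation, the question used in the example that follows ("What is the average rating of movies?") boils down to a single aggregate query. The sketch below runs that kind of query with the standard-library `sqlite3` module as a stand-in for DuckDB; the table layout, the sample rows, and the `average_rating` helper are made up for illustration and are not the IMDB dataset.

```python
import sqlite3
from typing import List, Tuple


def average_rating(rows: List[Tuple[str, float]]) -> float:
    """Load (title, rating) rows into an in-memory table and average the ratings."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE movies (title TEXT, rating REAL)")
        conn.executemany("INSERT INTO movies VALUES (?, ?)", rows)
        # The kind of SQL a data-analysis assistant would be expected to write.
        return conn.execute("SELECT AVG(rating) FROM movies").fetchone()[0]
    finally:
        conn.close()


# Made-up sample data, not taken from the real CSV file.
sample = [("Movie A", 8.0), ("Movie B", 6.0), ("Movie C", 7.0)]
print(average_rating(sample))
```

The value of an assistant here is that it writes and runs the query for you from a plain-language question, guided by the table descriptions it is given.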

• Create a file data_assistant.py

    import json

    from phi.assistant.duckdb import DuckDbAssistant

    duckdb_assistant = DuckDbAssistant(
        semantic_model=json.dumps({
            "tables": [
                {
                    "name": "movies",
                    "description": "Contains information about IMDB movies.",
                    "path": "https://phidata-public.s3.amazonaws.com/demo_data/IMDB-Movie-Data.csv",
                }
            ]
        }),
    )

    duckdb_assistant.print_response("What is the average rating of movies? Show me SQL.", markdown=True)

• Install duckdb and run data_assistant.py

    pip install duckdb
    python data_assistant.py

Assistant that Can Generate Pydantic Models

One of our favorite LLM features is generating structured data (i.e., Pydantic models) from text. Use this feature to extract features, generate movie scripts, create fake data, etc.
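The underlying idea is that the model's reply is parsed and validated into a typed object instead of being used as free text. The sketch below shows that step with a standard-library dataclass standing in for a Pydantic model so that it runs without extra dependencies; `MovieIdea` and `parse_movie_idea` are hypothetical names, and the checks are far simpler than what Pydantic performs.

```python
import json
from dataclasses import dataclass, fields
from typing import List


@dataclass
class MovieIdea:
    """Simplified stand-in for a Pydantic model."""
    name: str
    genre: str
    characters: List[str]


def parse_movie_idea(raw_json: str) -> MovieIdea:
    """Turn an LLM's JSON reply into a typed object, rejecting incomplete replies."""
    data = json.loads(raw_json)
    expected = {f.name for f in fields(MovieIdea)}
    missing = expected - data.keys()
    if missing:
        raise ValueError(f"Missing fields: {sorted(missing)}")
    if not isinstance(data["characters"], list):
        raise ValueError("characters must be a list")
    return MovieIdea(**{key: data[key] for key in expected})
```

With Pydantic, the field types and descriptions in the model do this validation work and also tell the LLM what each field should contain.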

Let’s create a movie assistant to write a MovieScript for us.

• Create a file movie_assistant.py

    from typing import List

    from pydantic import BaseModel, Field
    from rich.pretty import pprint

    from phi.assistant import Assistant


    class MovieScript(BaseModel):
        setting: str = Field(..., description="Provide a nice setting for the blockbuster.")
        ending: str = Field(..., description="The ending of the movie. If unavailable, provide a happy ending.")
        genre: str = Field(..., description="The genre of the movie. If unavailable, choose action, thriller, or romantic comedy.")
        name: str = Field(..., description="Give this movie a name")
        characters: List[str] = Field(..., description="The names of the characters in the movie.")
        storyline: str = Field(..., description="The 3-sentence plot of the movie. Make it exciting!")


    movie_assistant = Assistant(
        description="You help write movie scripts.",
        output_model=MovieScript,
    )

    pprint(movie_assistant.run("New York"))

• Run the movie_assistant.py file

    python movie_assistant.py

• The output is an object of the MovieScript class, as follows:

    MovieScript(
        setting='A bustling and vibrant New York City',
        ending='The protagonist saves the city and reconciles with estranged family.',
        genre='Action',
        name='City Pulse',
        characters=['Alex Mercer', 'Nina Castillo', 'Detective Mike Johnson'],
        storyline='In the heart of New York City, former cop turned vigilante Alex Mercer teams up with street-smart activist Nina Castillo to take down corrupt politicians threatening to destroy the city. They navigate a complex web of power and deception, uncovering shocking truths that push them to their limits. With time running out, they must race against the clock to save New York and confront their own demons.'
    )

PDF Assistant with Knowledge and Storage

Let’s create a PDF assistant to answer questions from PDFs. We will use PgVector for knowledge and storage.

Knowledge Base: The assistant can search for information to improve its responses (using a vector database).

Storage: Provides the assistant with long-term memory (using a database).
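As a rough picture of what storage means here, the sketch below keeps chat messages in a database table keyed by a run ID, so that a later session can read them back. It uses the standard-library `sqlite3` module rather than Postgres, and the `ChatHistoryStore` class and its table layout are hypothetical; Phidata's own storage classes have a different schema.

```python
import sqlite3
from typing import List, Tuple


class ChatHistoryStore:
    """Minimal chat-history table; pass a file path to persist between sessions."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS messages ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "run_id TEXT, role TEXT, content TEXT)"
        )

    def add(self, run_id: str, role: str, content: str) -> None:
        """Append one message to a run."""
        self.conn.execute(
            "INSERT INTO messages (run_id, role, content) VALUES (?, ?, ?)",
            (run_id, role, content),
        )
        self.conn.commit()

    def history(self, run_id: str) -> List[Tuple[str, str]]:
        """Return (role, content) pairs for a run, oldest first."""
        cursor = self.conn.execute(
            "SELECT role, content FROM messages WHERE run_id = ? ORDER BY id",
            (run_id,),
        )
        return cursor.fetchall()
```

Replaying `history(run_id)` into the prompt at the start of a session is what lets an assistant answer a question like "What was my last message?".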

1. Run PgVector

Install Docker Desktop, then start PgVector on port 5532 with the following command:

    docker run -d \
      -e POSTGRES_DB=ai \
      -e POSTGRES_USER=ai \
      -e POSTGRES_PASSWORD=ai \
      -e PGDATA=/var/lib/postgresql/data/pgdata \
      -v pgvolume:/var/lib/postgresql/data \
      -p 5532:5432 \
      --name pgvector \
      phidata/pgvector:16

2. Create a PDF Assistant

• Create a file pdf_assistant.py

    import typer
    from rich.prompt import Prompt
    from typing import Optional, List

    from phi.assistant import Assistant
    from phi.storage.assistant.postgres import PgAssistantStorage
    from phi.knowledge.pdf import PDFUrlKnowledgeBase
    from phi.vectordb.pgvector import PgVector2

    db_url = "postgresql+psycopg://ai:ai@localhost:5532/ai"

    knowledge_base = PDFUrlKnowledgeBase(
        urls=["https://phi-public.s3.amazonaws.com/recipes/ThaiRecipes.pdf"],
        vector_db=PgVector2(collection="recipes", db_url=db_url),
    )
    # Load the knowledge base; comment this out after the first run
    knowledge_base.load()

    storage = PgAssistantStorage(table_name="pdf_assistant", db_url=db_url)


    def pdf_assistant(new: bool = False, user: str = "user"):
        run_id: Optional[str] = None

        # Resume the user's existing run unless --new was passed
        if not new:
            existing_run_ids: List[str] = storage.get_all_run_ids(user)
            if len(existing_run_ids) > 0:
                run_id = existing_run_ids[0]

        assistant = Assistant(
            run_id=run_id,
            user_id=user,
            knowledge_base=knowledge_base,
            storage=storage,
            # Show tool calls in responses
            show_tool_calls=True,
            # Enable assistant to search the knowledge base
            search_knowledge=True,
            # Enable assistant to read chat history
            read_chat_history=True,
        )
        if run_id is None:
            run_id = assistant.run_id
            print(f"Starting run: {run_id}\n")
        else:
            print(f"Continuing run: {run_id}\n")

        # Run the assistant as a CLI application
        assistant.cli_app(markdown=True)


    if __name__ == "__main__":
        typer.run(pdf_assistant)

3. Install the libraries

    pip install -U pgvector pypdf "psycopg[binary]" sqlalchemy

4. Run the PDF Assistant

    python pdf_assistant.py

• Ask a question:

    How to make Thai fried noodles?

• See how the assistant searches the knowledge base and returns a response.

• Type bye to exit, then restart the assistant with python pdf_assistant.py and ask:

    What was my last message?

See how the assistant now maintains storage between sessions.

• Run the pdf_assistant.py file with the --new flag to start a new run.

    python pdf_assistant.py --new

Check the cookbook for more examples.

Next Steps

1. Read the basics to learn more about Phidata.

2. Read about assistants and learn how to customize them.

3. Check the cookbook for in-depth examples and code.

Demonstrations

Check out the following AI applications built using Phidata:

• PDF AI for summarizing and answering questions from PDFs.

• ArXiv AI for answering questions about ArXiv papers using the ArXiv API.

• HackerNews AI for summarizing stories, users, and sharing new trends on HackerNews.
