Hello everyone, I am Mu Yi, an internet technology product manager with a long-standing focus on AI. I hold an undergraduate degree from a top-2 university in China, a graduate degree from a top-10 US computer science program, and an MBA. I firmly believe that AI is a “power-up” for ordinary people, which is why I created the WeChat public account “AI Information Gap,” where I share AI knowledge including, but not limited to, AI popular science, AI tool evaluations, AI productivity tips, and AI industry insights. Follow me and you won’t get lost on the AI journey; we continue the journey in 2025.

This is a reading list published by the AI engineering community Latent Space, featuring 50 highly valuable papers, models, and blogs in the AI engineering field, covering the ten core modules of AI engineering, aimed at helping AI engineers and enthusiasts build a systematic AI knowledge system and enhance practical skills.

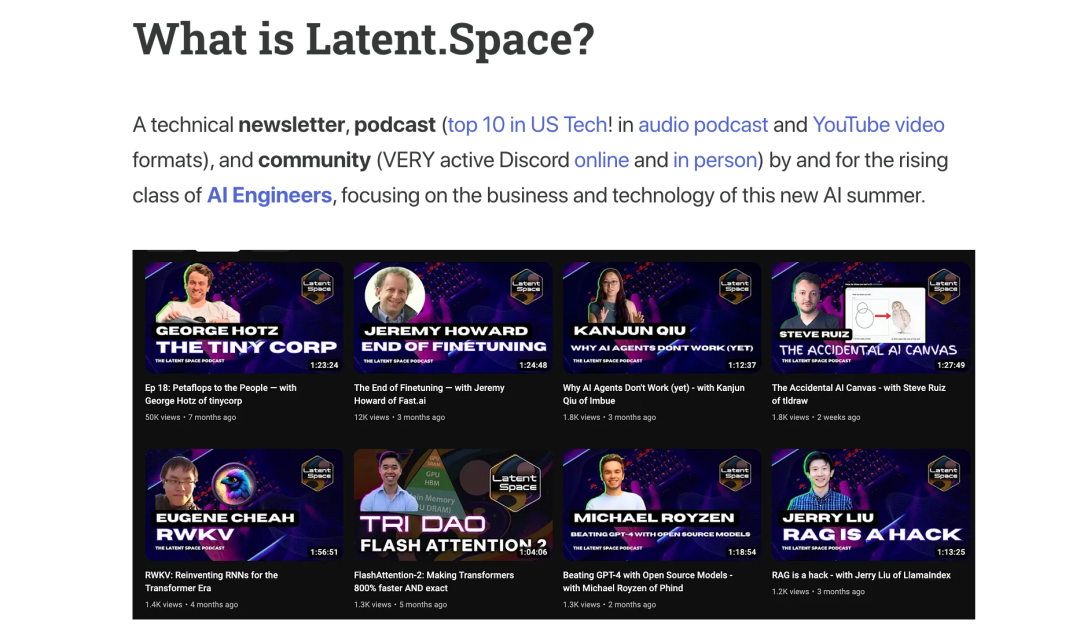

About Latent Space

Latent Space is a technical community focused on the AI engineering field, known for its high-quality newsletters, top podcasts (ranked in the top ten among tech podcasts in the US!), and active online and offline communities. It is hailed as the “number one AI engineering podcast.” Latent Space has 13.1K followers on the X platform, including Elon Musk and renowned podcast host Lex Fridman!

Without further ado, let’s get started.

1. Cutting-Edge LLMs

This section focuses on large language models (LLMs), including the GPT series (especially the GPT-4o system card), Claude 3 series, Gemini series, and open-source models like LLaMA series.

List:

OpenAI Series: Leading Multiple Technological Innovations

- GPT-1: https://cdn.openai.com/research-covers/language-unsupervised/language_understanding_paper.pdf (Kicked off a new era of pre-trained language models)
- GPT-2: https://cdn.openai.com/better-language-models/language_models_are_unsupervised_multitask_learners.pdf (Demonstrated the capabilities of large language models)
- GPT-3: https://arxiv.org/abs/2005.14165 (Milestone model with few-shot learning capabilities)
- Codex: https://arxiv.org/abs/2107.03374 (Focused on code generation)
- InstructGPT: https://arxiv.org/abs/2203.02155 (Improved instruction following through reinforcement learning from human feedback)
- GPT-4 Technical Report: https://arxiv.org/abs/2303.08774 (Classic multimodal model)
- GPT 3.5: https://openai.com/index/chatgpt/ (The model behind ChatGPT)
- GPT-4o: https://openai.com/index/hello-gpt-4o/ (Latest release supporting stronger real-time multimodal interaction)
- o1: https://openai.com/index/introducing-openai-o1-preview/ (First-generation reasoning model)
- o3: https://openai.com/index/deliberative-alignment/ (Second-generation reasoning model)

Anthropic Series: One of OpenAI’s Strong Competitors

- Claude 3: https://www-cdn.anthropic.com/de8ba9b01c9ab7cbabf5c33b80b7bbc618857627/Model_Card_Claude_3.pdf (Excels in multiple evaluation benchmarks)
- Claude 3.5 Sonnet: https://www.anthropic.com/news/claude-3-5-sonnet (Latest model with further improvements in performance and speed)

Google Series: Outstanding Performance in Multimodal Aspects

- Gemini 1: https://arxiv.org/abs/2312.11805 (Multimodal large model supporting various inputs like text, images, and audio)
- Gemini 2.0 Flash: https://blog.google/technology/google-deepmind/google-gemini-ai-update-december-2024/#gemini-2-0-flash (A lighter and faster version)
- Gemini 2.0 Flash Thinking: https://ai.google.dev/gemini-api/docs/thinking-mode (Unlocking the model’s reasoning capabilities)
- Gemma 2: https://arxiv.org/abs/2408.00118 (Google’s latest open-source model)

Meta Series: Open Source and High Performance

- LLaMA: https://arxiv.org/abs/2302.13971 (Pioneer of open-source large language models)
- Llama 2: https://arxiv.org/abs/2307.09288 (Significantly improved performance, supports commercial use)
- Llama 3: https://arxiv.org/abs/2407.21783 (Latest open-source model reaching current SOTA levels)

Mistral AI Series: Europe’s OpenAI

- Mistral 7B: https://arxiv.org/abs/2310.06825 (A small yet elegant model)
- Mixtral of Experts: https://arxiv.org/abs/2401.04088 (Uses a mixture-of-experts, or MoE, architecture)
- Pixtral 12B: https://arxiv.org/abs/2410.07073 (A multimodal model with 12 billion parameters)

DeepSeek Series: The Rising Star in China’s AI Field

- DeepSeek V1: https://arxiv.org/abs/2401.02954 (First generation of DeepSeek)
- DeepSeek Coder: https://arxiv.org/abs/2401.14196 (Focused on code generation)
- DeepSeek MoE: https://arxiv.org/abs/2401.06066 (DeepSeek’s mixture-of-experts architecture)
- DeepSeek V2: https://arxiv.org/abs/2405.04434 (Second generation of DeepSeek)
- DeepSeek V3: https://github.com/deepseek-ai/DeepSeek-V3 (DeepSeek’s latest and most powerful model)

Apple Series: Edge Intelligence

- Apple Intelligence: https://arxiv.org/abs/2407.21075 (Apple’s entry into edge intelligence)

2. Benchmark Testing and Evaluation

How do we objectively measure the “IQ” of AI models? This section will introduce mainstream model evaluation benchmark tests and evaluation frameworks. Just like students’ exams in the real world, benchmark tests can objectively assess AI models’ abilities in specific tasks, helping us better understand the strengths and weaknesses of the models.

List:

General Knowledge and Reasoning Ability Assessment

Evaluating the model’s understanding and reasoning abilities across various academic disciplines.

- MMLU (Massive Multitask Language Understanding): https://arxiv.org/abs/2009.03300 (One of the most widely used knowledge tests, covering 57 subjects across the humanities, STEM, social sciences, and more)
- MMLU Pro (Professional-Level MMLU): https://arxiv.org/abs/2406.01574 (Upgraded version of MMLU, more challenging and closer to professional-level testing)
- GPQA & GPQA Diamond: https://arxiv.org/abs/2311.12022 (Graduate-level questions of extremely high quality and difficulty; GPQA Diamond is its highest-quality subset)
- BIG-Bench: https://arxiv.org/abs/2206.04615 (Contains over 200 different tasks to comprehensively evaluate a model’s capabilities)
- BIG-Bench Hard: https://arxiv.org/abs/2210.09261 (A subset of BIG-Bench that keeps only the most challenging tasks)

Long Text Reasoning Ability Assessment

Evaluating the model’s ability to handle long texts and perform complex reasoning.

- MuSR (Multistep Soft Reasoning): https://arxiv.org/abs/2310.16049 (Evaluates the model’s ability to perform multi-step reasoning over long natural-language narratives)
- LongBench: https://arxiv.org/abs/2412.15204 (Multi-task, bilingual, long text comprehension benchmark test)
- BABILong: https://arxiv.org/abs/2406.10149 (Synthetic long text reasoning dataset)
- Lost in the Middle: https://arxiv.org/abs/2307.03172 (Studies how well models use information located in the middle of long texts)
- Needle in a Haystack: https://github.com/gkamradt/LLMTest_NeedleInAHaystack (Evaluates the model’s ability to extract a key piece of information from a long text)
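
To make the “needle in a haystack” idea concrete, here is a minimal sketch of how such a test case can be built: a known fact (the needle) is inserted at a chosen depth in filler text, and the model’s answer is checked for that fact. Everything here is an illustrative assumption rather than the linked repository’s code: the function names are hypothetical, and `toy_model` is a stand-in for a real LLM call that deliberately “sees” only the last few hundred characters.

```python
def build_haystack(filler_sentences, needle, depth):
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end) of the filler."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences)


def score_answer(answer, expected):
    """Crude scoring: 1 if the expected fact appears in the answer, else 0."""
    return int(expected.lower() in answer.lower())


def toy_model(prompt, window=400):
    """Stand-in for a real LLM (assumption): it only reads the last `window` characters."""
    visible = prompt[-window:]
    marker = "The secret code is "
    start = visible.find(marker)
    if start == -1:
        return "I don't know."
    return visible[start:].split(".")[0] + "."


filler = ["The sky was grey that morning."] * 50
needle = "The secret code is 7421."
question = "\nQuestion: What is the secret code?"

# Sweep the needle position and record whether the model retrieves it.
results = {}
for depth in (0.0, 0.5, 1.0):
    prompt = build_haystack(filler, needle, depth) + question
    results[depth] = score_answer(toy_model(prompt), "7421")

print(results)
```

A real evaluation would replace `toy_model` with an actual model call and sweep both the context length and the needle depth, which is what produces the familiar heatmaps.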

Mathematical Ability Assessment

Evaluating the model’s mathematical reasoning and problem-solving abilities.

- MATH: https://arxiv.org/abs/2103.03874 (Contains 12,500 competition-level math problems covering algebra, geometry, probability, and more)
- AIME (American Invitational Mathematics Examination): https://www.kaggle.com/datasets/hemishveeraboina/aime-problem-set-1983-2024 (Invitational exam with difficulty between AMC and IMO)
- FrontierMath: https://arxiv.org/abs/2411.04872 (Original, expert-written problems that target advanced mathematical reasoning well beyond standard competition level)
- AMC10 & AMC12: https://github.com/ryanrudes/amc (American Mathematics Competitions; AMC10 is for students in grade 10 and below, AMC12 for students in grade 12 and below)

Instruction Following Ability Assessment

Evaluating the model’s ability to understand and execute instructions.

- IFEval (Instruction Following Evaluation): https://arxiv.org/abs/2311.07911 (Evaluates the model’s ability to follow various types of instructions)
- MT-Bench (Multi-Turn Benchmark): https://arxiv.org/abs/2306.05685 (Evaluates the model’s instruction-following ability in multi-turn dialogue scenarios)

Abstract Reasoning Ability Assessment

Evaluating the model’s abstract reasoning and pattern recognition capabilities.

- ARC AGI (Abstraction and Reasoning Corpus): https://arcprize.org/arc (Evaluates the model’s general intelligence, challenging the model to perform abstract reasoning like humans)

3. Prompt Engineering, In-Context Learning, and Chain of Thought

How can we guide models to generate results that better meet our needs through prompt techniques? This section introduces prompt engineering, in-context learning (ICL), and chain of thought (CoT) techniques to help us interact better with AI models.

List:

Practical Tutorials

- The Prompt Report: https://arxiv.org/abs/2406.06608 (Latest comprehensive report on prompt engineering)
- Lilian Weng’s Blog: https://lilianweng.github.io/posts/2023-03-15-prompt-engineering/ (Systematic summary of prompt engineering by Lilian Weng)
- Eugene Yan’s Blog: https://eugeneyan.com/writing/prompting/ (Prompt engineering tips shared by Eugene Yan)
- Anthropic’s Prompt Engineering Tutorial: https://github.com/anthropics/prompt-eng-interactive-tutorial (Anthropic’s official step-by-step guide to building effective prompts)
- AI Engineer Workshop: https://www.youtube.com/watch?v=hkhDdcM5V94 (Video sharing practical experiences in prompt engineering)

Core Technologies

- Chain-of-Thought (CoT): https://arxiv.org/abs/2201.11903 (Pioneering work on chain-of-thought prompting)
- Scratchpads: https://arxiv.org/abs/2112.00114 (Gives the model “scratch paper” for intermediate steps to enhance its reasoning ability)
- Let’s Think Step By Step: https://arxiv.org/abs/2205.11916 (Classic zero-shot prompt, the hallmark phrase of chain-of-thought prompting)
- Tree of Thoughts (ToT): https://arxiv.org/abs/2305.10601 (Explores a tree of candidate reasoning steps to enhance the model’s reasoning and planning capabilities)
- Prompt Tuning: https://aclanthology.org/2021.emnlp-main.243/ (Learns soft prompts that adjust the model’s behavior)
- Prefix-Tuning: https://arxiv.org/abs/2101.00190 (Adds trainable prefixes to steer model outputs)
- Adjust Decoding: https://arxiv.org/abs/2402.10200 (Improves model performance by adjusting decoding strategies)
- Representation Engineering: https://vgel.me/posts/representation-engineering/ (Guides generation by directly modifying the model’s hidden states)
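
As a rough illustration of the two prompting styles in the list above, the sketch below builds a zero-shot chain-of-thought prompt (appending “Let’s think step by step.”) and a few-shot chain-of-thought prompt (worked examples that include their reasoning). The function names and the `Q:`/`A:` layout are assumptions made for illustration, and the worked example is invented here, not taken from either paper.

```python
def zero_shot_cot(question):
    """Zero-shot CoT: append the trigger phrase so the model reasons before answering."""
    return f"Q: {question}\nA: Let's think step by step."


def few_shot_cot(examples, question):
    """Few-shot CoT: show worked examples with explicit reasoning, then ask the new question."""
    parts = []
    for example in examples:
        parts.append(
            f"Q: {example['question']}\n"
            f"A: {example['reasoning']} The answer is {example['answer']}."
        )
    # The final question is left open for the model to complete.
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


examples = [
    {
        "question": "A shelf holds 3 boxes with 4 books each. How many books are there?",
        "reasoning": "There are 3 boxes and each has 4 books, so 3 * 4 = 12.",
        "answer": "12",
    }
]
new_question = "A bus has 5 rows of 6 seats. How many seats are there?"

print(zero_shot_cot(new_question))
print()
print(few_shot_cot(examples, new_question))
```

The only difference between the two is where the reasoning signal comes from: a trigger phrase in the first case, demonstrations in the second.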

Automatic Prompt Engineering

- Automatic Prompt Engineering (APE): https://arxiv.org/abs/2211.01910 (Automatically generates and optimizes prompts)
- DSPy: https://arxiv.org/abs/2310.03714 (DSPy framework, builds complex AI systems through programming rather than manually writing prompts)

4. Retrieval-Augmented Generation (RAG)

RAG, short for Retrieval-Augmented Generation, combines the strengths of retrieval and generative models, utilizing external knowledge bases to enhance model performance. This section introduces Meta’s RAG paper, MTEB embedding benchmark tests, GraphRAG, and the RAGAS evaluation framework. Vector databases are also recommended as important infrastructure for current RAG applications.

List:

Basic Theory

- Introduction to Information Retrieval: https://nlp.stanford.edu/IR-book/information-retrieval-book.html (A classic textbook in the field of information retrieval)
- Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks: https://arxiv.org/abs/2005.11401 (Meta’s RAG paper, pioneering work on RAG technology)
- RAG 2.0: https://contextual.ai/introducing-rag2/ (Evolution of RAG technology)

Core Technologies

- HyDE (Hypothetical Document Embeddings): https://docs.llamaindex.ai/en/stable/optimizing/advanced_retrieval/query_transformations/ (Enhances query effectiveness through hypothetical documents)
- Chunking: https://research.trychroma.com/evaluating-chunking (Chunking strategies)
- Rerank: https://cohere.com/blog/rerank-3pt5 (Re-ranking to optimize retrieval result ordering)
- MTEB (Massive Text Embedding Benchmark): https://arxiv.org/abs/2210.07316 (Benchmark test for evaluating text embedding model performance)
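
The retrieve-then-generate flow behind RAG can be sketched in a few lines: split documents into overlapping chunks, score chunks against the query, and place the best ones in the prompt. This is a toy under stated assumptions, not any library’s API: a bag-of-words count vector stands in for a real embedding model, a Python list stands in for a vector database, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text, chunk_size=8, overlap=2):
    """Split text into chunks of `chunk_size` words; neighbors share `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    if not words:
        return []
    step = chunk_size - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + chunk_size]) for i in range(0, stop, step)]


def embed(text):
    """Toy 'embedding' (assumption): word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vector, embed(c)), reverse=True)
    return ranked[:k]


def build_prompt(query, context_chunks):
    """Assemble the augmented prompt that would be sent to the generator model."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


docs = [
    "LoRA freezes the base model and trains small low-rank matrices.",
    "Whisper is a speech recognition model trained on multilingual audio.",
    "YOLO is a real-time object detection model.",
]
query = "Which method trains low-rank matrices?"
top = retrieve(query, docs, k=1)
prompt = build_prompt(query, top)
print(prompt)
```

In a production pipeline the chunking strategy, the embedding model, and a reranking step each replace one of these toy pieces, which is why the list above treats them as separate topics.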

Advanced Technologies

- GraphRAG: https://arxiv.org/pdf/2404.16130 (Combines knowledge graphs with RAG to enhance RAG’s knowledge reasoning capabilities)
- RAGAS: https://arxiv.org/abs/2309.15217 (Automated framework for evaluating RAG system performance)

Practical Guides

- LlamaIndex: https://docs.llamaindex.ai/en/stable/understanding/rag/ (Practical tutorials and tools for RAG provided by LlamaIndex)
- LangChain: https://python.langchain.com/docs/tutorials/rag/ (Integration solutions and sample code for RAG provided by LangChain)

5. AI Agents

AI agents are the big trend for 2025 and a likely future form of AI: systems that can perceive their environment, make decisions, and take actions much as humans do. This section introduces important agent-related papers such as SWE-Bench, ReAct, MemGPT, and Voyager.

List:

Benchmark Testing

- SWE-Bench: https://arxiv.org/abs/2310.06770 (Evaluates agents’ ability to resolve real-world GitHub software engineering issues)
- SWE-Agent: https://arxiv.org/abs/2405.15793 (LLM-based software engineering agent)
- SWE-Bench Multimodal: https://arxiv.org/abs/2410.03859 (Multimodal SWE-Bench)
- Konwinski Prize: https://kprize.ai/ (Prize competition for open-source AI that resolves software engineering issues on a contamination-free version of SWE-Bench)

Core Technologies

- ReAct: Synergizing Reasoning and Acting in Language Models: https://arxiv.org/abs/2210.03629 (ReAct framework, combining reasoning and acting)
- MemGPT: Towards LLMs as Operating Systems: https://arxiv.org/abs/2310.08560 (Empowering agents with long-term memory capabilities)
- MetaGPT: The Multi-Agent Framework: https://arxiv.org/abs/2308.00352 (Multi-agent meta-programming framework, enabling multiple agents to work together like a team through role assignment and collaboration)
- AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation: https://arxiv.org/abs/2308.08155 (Microsoft’s open-source framework supporting the construction of complex LLM applications by defining and combining multiple agents)
- Smallville: Generative Agents: Interactive Simulacra of Human Behavior: https://arxiv.org/abs/2304.03442 and https://github.com/joonspk-research/generative_agents (From Stanford and Google, creating simulated agents with social behaviors)
- Voyager: An Open-Ended Embodied Agent with Large Language Models: https://arxiv.org/abs/2305.16291 (NVIDIA’s Minecraft agent, capable of continuous learning, exploring, and discovering in the Minecraft world)
- Agent Workflow Memory: https://arxiv.org/abs/2409.07429 (Improves planning and execution capabilities of agents by introducing workflow memory mechanisms)
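
The ReAct idea of interleaving reasoning and acting can be sketched as a loop: the model emits a thought and an action, the runtime executes the action with a tool, and the observation is appended to the transcript for the next step. The sketch below is a simplified illustration, not the paper’s implementation: `scripted_llm` is a hard-coded stand-in for a real model, and the `Action: tool[argument]` text format, the tool registry, and all names are assumptions.

```python
import re


def calculator(expression):
    """A deliberately tiny tool: evaluates 'a + b' or 'a * b' for integers."""
    left, operator, right = expression.split()
    operations = {"+": lambda a, b: a + b, "*": lambda a, b: a * b}
    if operator not in operations:
        raise ValueError(f"unsupported operator: {operator}")
    return str(operations[operator](int(left), int(right)))


TOOLS = {"calculator": calculator}


def scripted_llm(transcript):
    """Stand-in for a real model (assumption): acts first, then answers from the observation."""
    if "Observation:" not in transcript:
        return "Thought: I need to compute 6 * 7.\nAction: calculator[6 * 7]"
    observation = transcript.rsplit("Observation:", 1)[1].strip()
    return f"Thought: I now know the result.\nFinal Answer: {observation}"


def react(question, llm, tools, max_steps=5):
    """Run the thought -> action -> observation loop until a final answer appears."""
    transcript = f"Question: {question}"
    for _ in range(max_steps):
        reply = llm(transcript)
        transcript += "\n" + reply
        final = re.search(r"Final Answer:\s*(.+)", reply)
        if final:
            return final.group(1).strip(), transcript
        action = re.search(r"Action:\s*(\w+)\[(.*?)\]", reply)
        if action is None:
            break  # The model produced neither an action nor an answer.
        name, argument = action.groups()
        observation = tools[name](argument) if name in tools else f"unknown tool {name}"
        transcript += f"\nObservation: {observation}"
    return None, transcript


answer, trace = react("What is 6 * 7?", scripted_llm, TOOLS)
print(trace)
```

The `max_steps` cap is a practical safeguard so that a model which never produces a final answer cannot loop forever.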

Practical Guides

- Building Effective Agents: https://www.anthropic.com/research/building-effective-agents (Anthropic shares practical experiences and thoughts on building effective agents)
- OpenAI Swarm: https://github.com/openai/swarm (OpenAI’s multi-agent tools)

6. Code Generation

This section introduces The Stack code dataset, HumanEval/Codex benchmark tests, AlphaCodium papers, etc.

List:

Datasets

- The Stack: https://arxiv.org/abs/2211.15533 (Large-scale, multilingual source code dataset, 3 TB)

Code Generation Models

- DeepSeek-Coder: https://arxiv.org/abs/2401.14196 (DeepSeek-Coder model paper)
- Code Llama: https://ai.meta.com/research/publications/code-llama-open-foundation-models-for-code/ (A series of code generation models open-sourced by Meta)
- Qwen2.5-Coder: https://arxiv.org/abs/2409.12186 (Code generation model from the Qwen2.5 series)
- AlphaCodium: https://arxiv.org/abs/2401.08500 (CodiumAI’s test-based, multi-stage “flow engineering” approach to code generation; a workflow around existing LLMs rather than a new model, and not to be confused with DeepMind’s AlphaCode)

Evaluation Benchmarks

- HumanEval/Codex: https://arxiv.org/abs/2107.03374 (Evaluates the code generation model’s ability to solve basic programming problems)
- Aider: https://aider.chat/docs/leaderboards/ (Aider’s compilation of multiple code generation benchmark leaderboards)
- Codeforces: https://arxiv.org/abs/2312.02143 (Used to evaluate the model’s competitive programming abilities)
- BigCodeBench: https://huggingface.co/spaces/bigcode/bigcodebench-leaderboard (Multi-dimensional evaluation suite for code generation launched by the BigCode project)
- LiveCodeBench: https://livecodebench.github.io/ (Continuously collects new programming problems to provide contamination-free evaluation of code models)
- SciCode: https://buttondown.com/ainews/archive/ainews-to-be-named-5745/ (Evaluates the performance of code generation models in the scientific computing field)
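
Benchmarks in the HumanEval family are usually reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. The Codex paper estimates it from n samples per problem, of which c pass, as 1 - C(n-c, k) / C(n, k). The sketch below implements that formula with the standard library; the function name and the example numbers are illustrative assumptions.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate for one problem.

    n: total samples generated, c: samples that passed the tests, k: budget.
    Computes 1 - C(n - c, k) / C(n, k), the chance that a random size-k
    subset of the n samples contains at least one passing sample.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # math.comb returns 0 when k > n - c, so the estimate is exactly 1.0 there.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results, k):
    """Average pass@k over problems; `results` is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical per-problem results: (samples generated, samples that passed).
example_results = [(10, 3), (10, 0), (10, 10)]
print(mean_pass_at_k(example_results, k=1))
print(mean_pass_at_k(example_results, k=5))
```

Sampling more than k solutions per problem and using this estimator gives a lower-variance number than literally drawing k samples once.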

AI Code Review

- CriticGPT: https://criticgpt.org/criticgpt-openai/ (OpenAI’s GPT-4-based model that critiques ChatGPT’s code output to help human reviewers catch defects)

7. Visual Models

This section introduces visual models such as CLIP, Segment Anything Model, and the development trends of multimodal large models.

List:

Object Detection

- YOLO (You Only Look Once): https://arxiv.org/abs/1506.02640 (Classic object detection model, known for its speed and accuracy)
- DETRs Beat YOLOs on Real-time Object Detection: https://arxiv.org/abs/2304.08069 (RT-DETR, a Transformer-based real-time object detector from the DETR family)

Visual-Language Pre-Training

- CLIP (Contrastive Language-Image Pre-training): https://arxiv.org/abs/2103.00020 (OpenAI’s milestone work, linking images and text through contrastive learning)
- MMVP Benchmark (Multimodal Visual Patterns), from “Eyes Wide Shut? Exploring the Visual Shortcomings of Multimodal LLMs”: https://arxiv.org/abs/2401.06209 (Benchmark that exposes the visual shortcomings of multimodal LLMs)

Image Segmentation

- Segment Anything Model (SAM): https://arxiv.org/abs/2304.02643 (Meta’s image segmentation model, capable of segmenting any object in an image through prompts)

Multimodal Large Models

- Flamingo: a Visual Language Model for Few-Shot Learning: https://huyenchip.com/2023/10/10/multimodal.html (DeepMind’s multimodal model supporting few-shot learning)
- Chameleon: Mixed-Modal Early-Fusion Foundation Models: https://arxiv.org/abs/2405.09818 (Meta’s multimodal model, adopting an early fusion approach)
- GPT-4V system card: https://cdn.openai.com/papers/GPTV_System_Card.pdf (System card for GPT-4V)

8. Speech Models

From speech recognition to speech synthesis, AI is changing the way we interact with machines. This section introduces speech models such as Whisper, AudioPaLM, NaturalSpeech, and related application cases.

List:

Speech Recognition (ASR)

- Whisper: https://arxiv.org/abs/2212.04356 (OpenAI’s open-source speech recognition model, supporting multiple languages)

Speech Synthesis (TTS)

- NaturalSpeech: https://arxiv.org/abs/2205.04421 (Microsoft’s high-quality speech synthesis model)

Large Speech Models

- AudioPaLM: https://audiopalm.github.io/ (Google’s audio-text multimodal large model, capable of processing and generating audio and text content)

Real-Time Speech Technology

- Kyutai Moshi: https://arxiv.org/html/2410.00037v2 (Open-source speech-text model for low-latency, full-duplex spoken dialogue)
- OpenAI Realtime API: https://platform.openai.com/docs/guides/realtime (OpenAI’s real-time API)

9. Image/Video Models

Stable Diffusion, Sora, and other generative models showcase the tremendous potential of AI in image and video generation. This section introduces papers related to image and video models, as well as tools like ComfyUI.

List:

Diffusion Models

- Latent Diffusion Models: https://arxiv.org/abs/2112.10752 (Core technology of Stable Diffusion)
- Consistency Models: https://arxiv.org/abs/2303.01469 (Introduces consistency constraints to accelerate the sampling speed of diffusion models, significantly reducing sampling steps)
- DiT (Diffusion Transformers): https://arxiv.org/abs/2212.09748 (Core technology of Sora, applies Transformer architecture to diffusion models, laying the foundation for generating high-quality videos)

Image Generation Models

- DALL-E: https://arxiv.org/abs/2102.12092 (OpenAI’s pioneering work, generating images based on textual descriptions)
- DALL-E 2: https://arxiv.org/abs/2204.06125 (An upgraded version of DALL-E, with higher resolution and quality of generated images)
- DALL-E 3: https://cdn.openai.com/papers/dall-e-3.pdf (Further improves image generation quality and better understands and follows textual descriptions)
- Imagen: https://arxiv.org/abs/2205.11487 (Google’s text-to-image generation model)
- Imagen 2: https://deepmind.google/technologies/imagen-2/ (The upgraded version of Imagen, supporting more diverse image editing features)
- Imagen 3: https://arxiv.org/abs/2408.07009 (Google’s latest image generation model)

Video Generation Models

- Sora: https://openai.com/index/sora/ (OpenAI’s text-to-video generation model, now released)

Tools

- ComfyUI: https://github.com/comfyanonymous/ComfyUI (Node-based Stable Diffusion WebUI, providing a flexible and controllable image and video generation process)

10. Model Fine-Tuning

How do we customize a model for the needs of a specific domain? This section introduces fine-tuning techniques such as LoRA/QLoRA and DPO, and how to use them to improve model performance.

List:

Parameter-Efficient Fine-Tuning (PEFT)

- LoRA: Low-Rank Adaptation of Large Language Models: https://arxiv.org/abs/2106.09685 (Classic work on parameter-efficient fine-tuning: freezes the pretrained weights and trains only a small number of parameters in low-rank adapter matrices)
- QLoRA: Efficient Finetuning of Quantized LLMs: http://arxiv.org/abs/2305.14314 (Combines LoRA with 4-bit quantization, further reducing the computational resources required for fine-tuning)
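
To show what “low-rank adapter” means in practice, here is a pure-Python sketch of a LoRA-style linear layer: the frozen weight W is left untouched and the output becomes W x + (alpha / r) * B A x, where A and B are the only trainable matrices. Following the LoRA paper, A starts with small random values and B starts at zero, so the adapted layer initially matches the base layer. The class and helper names are hypothetical, and a real implementation would use a tensor library rather than nested lists.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Frozen weight plus a trainable low-rank update (illustrative sketch)."""

    def __init__(self, weight, rank, alpha, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # Frozen pretrained weight, shape d_out x d_in.
        self.scale = alpha / rank
        # A: rank x d_in, small Gaussian init. B: d_out x rank, zero init.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameters(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


size = 8
identity = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
layer = LoRALinear(identity, rank=2, alpha=4)
x = [float(i) for i in range(1, size + 1)]

print(layer.forward(x))                 # Same as the base layer while B is zero.
print(layer.trainable_parameters())     # Adapter parameters.
print(size * size)                      # Parameters in the full weight matrix.
```

The saving grows with layer size: for a d x d weight the adapter trains 2 * r * d values instead of d * d, which is what makes the approach practical for large models.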

Preference Alignment Fine-Tuning

- DPO (Direct Preference Optimization: Your Language Model is Secretly a Reward Model): https://arxiv.org/abs/2305.18290 (Optimizes the policy directly on preference data, aligning LLMs with human preferences without training a separate reward model)
- ReFT: Representation Finetuning for Language Models: https://arxiv.org/abs/2404.03592 (Adapts models by fine-tuning hidden-layer representations, serving as a complement to DPO)
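
The DPO objective for a single preference pair can be written out directly. Given log-probabilities of the chosen and rejected responses under the policy being trained and under a frozen reference model, the loss is -log sigmoid(beta * [(log pi(y_w) - log pi_ref(y_w)) - (log pi(y_l) - log pi_ref(y_l))]). The sketch below computes that quantity with the standard library; the function name, argument names, and the example numbers are assumptions for illustration, and a real training loop would compute the log-probabilities from model outputs and backpropagate through them.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one (chosen, rejected) pair of sequence log-probabilities."""
    # Implicit rewards: how much more likely each response is under the policy
    # than under the frozen reference model, scaled by beta.
    chosen_reward = beta * (policy_chosen_logp - ref_chosen_logp)
    rejected_reward = beta * (policy_rejected_logp - ref_rejected_logp)
    margin = chosen_reward - rejected_reward
    # -log(sigmoid(margin)) equals softplus(-margin); this form avoids overflow.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# Policy identical to the reference: the margin is 0 and the loss is log(2).
print(dpo_loss(-1.0, -2.0, -1.0, -2.0))

# Policy has shifted probability toward the chosen response: the loss drops.
print(dpo_loss(-0.5, -3.0, -1.0, -2.0))
```

Because the reward is defined implicitly through the log-probability ratio, no separate reward model needs to be trained, which is the point the paper’s subtitle makes.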

Data Construction

- Orca 3/AgentInstruct: Agentic Instruction Generation: https://www.microsoft.com/en-us/research/blog/orca-agentinstruct-agentic-flows-can-be-effective-synthetic-data-generators/ (Utilizes agents to generate instruction data for model fine-tuning)

Reinforcement Learning Fine-Tuning (RL Fine-Tuning)

- RL Finetuning for o1: https://www.interconnects.ai/p/openais-reinforcement-finetuning (OpenAI’s recent reinforcement learning-based fine-tuning technology)
- Let’s Verify Step By Step: https://arxiv.org/abs/2305.20050 (Process supervision: gives feedback on each reasoning step rather than only on the final answer)

Tutorials

- How to fine-tune open LLMs: https://www.philschmid.de/fine-tune-llms-in-2025 (A practical LLM fine-tuning tutorial)

Conclusion

Isn’t it a joy to learn and practice?!
